Understanding and Mitigating the Impact of Load Imbalance in

42 Slides3.55 MB

Understanding and Mitigating the Impact of Load Imbalance in the Memory Caching Tier - Yu-Ju Jong and Mithuna Thottethodi Purdue University Presented by Rajath Subramanyam for CS525 Spring 2014

Agenda Motivation Background System design Evaluation Discussion

Motivation Distributed memory caching systems have gained huge popularity “memcached” is the most popular distributed memory caching system It offers tremendous performance improvements compared to architectures that necessitate direct interaction with the storage layer

Motivation Load imbalance in the memory caching tier can cause severe performance degradation “Skewed key popularity” can cause significant degradation in the tail latency The paper presents a memcached variant called “SPORE” which uses self-adapting, popularity-based replication to mitigate load imbalance

Agenda Motivation Background System design Evaluation Discussion

memcached Very simple In-memory distributed hash table service “Hot” data from DB stored in cache Memory caching tier comprises of a pool of memcached servers each of which is a standalone server that need not be aware of the other servers in the pool

memcached users and services *Disclaimer: All images from World Wide Web

memcached access characteristics Read-mostly workloads Writes in order to be durable have to be made to the underlying (non-volatile) data store Facebook reports that reads are 2x times writes in their workloads Other studies have shown that writes constitute mere 3-12% of all accesses in large scale key-value stores

Load Imbalance Two factors influence load on each server in the memcached server pool: 1) The number of keys mapped to that server 2) Popularity of those keys

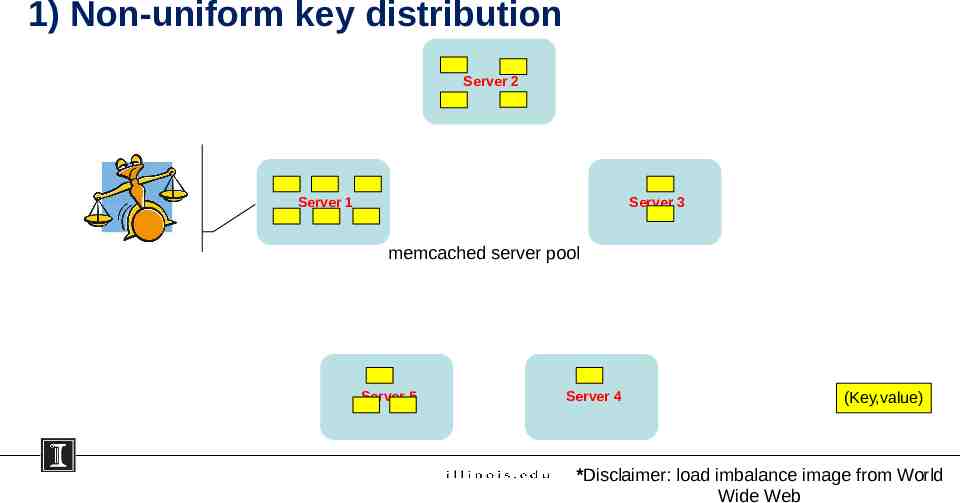

1) Non-uniform key distribution Server 2 Server 1 Server 3 memcached server pool Server 5 Server 4 (Key,value) *Disclaimer: load imbalance image from World Wide Web

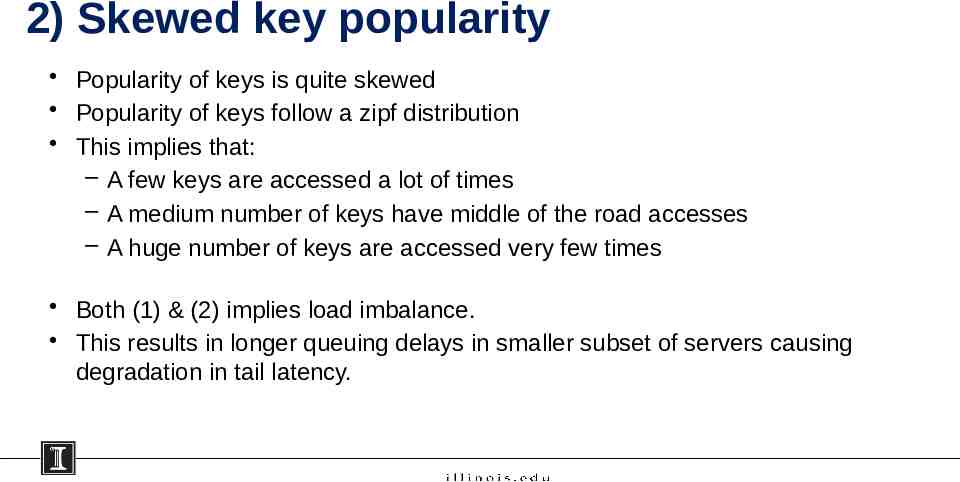

2) Skewed key popularity Popularity of keys is quite skewed Popularity of keys follow a zipf distribution This implies that: – A few keys are accessed a lot of times – A medium number of keys have middle of the road accesses – A huge number of keys are accessed very few times Both (1) & (2) implies load imbalance. This results in longer queuing delays in smaller subset of servers causing degradation in tail latency.

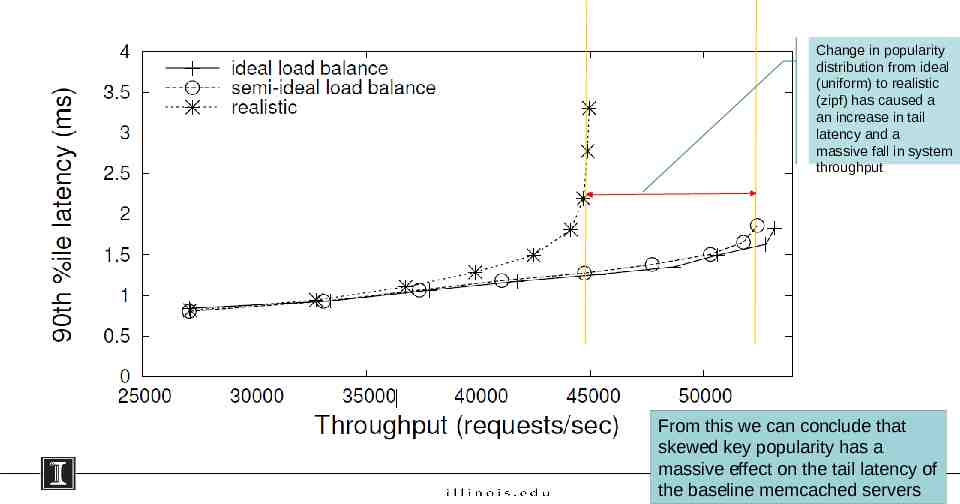

Change in popularity distribution from ideal (uniform) to realistic (zipf) has caused a an increase in tail latency and a massive fall in system throughput From this we can conclude that skewed key popularity has a massive effect on the tail latency of the baseline memcached servers

Agenda Motivation Background System design Evaluation Discussion

SPORE design SPORE stands for Self-adapting Popularity-based Replication When there are no popular keys then each key is associated with only one server called “Home Server” When mostly-read tuples become popular they are replicated on “Shadow Servers” Replica count ‘γ’: number of replicas of the tuple not including the original tuple *Disclaimer: Dilbert comic strip from World Wide Web

Several questions arise at this stage ? *Disclaimer: All images from World Wide Web

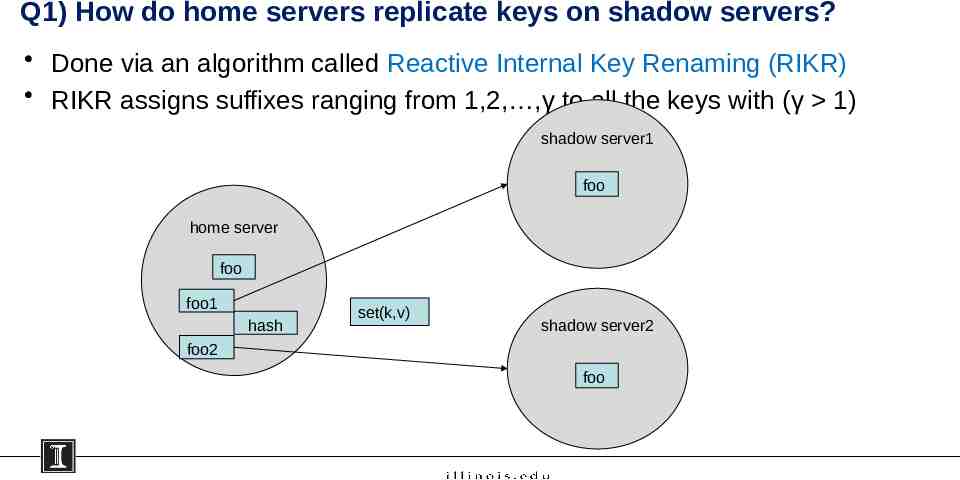

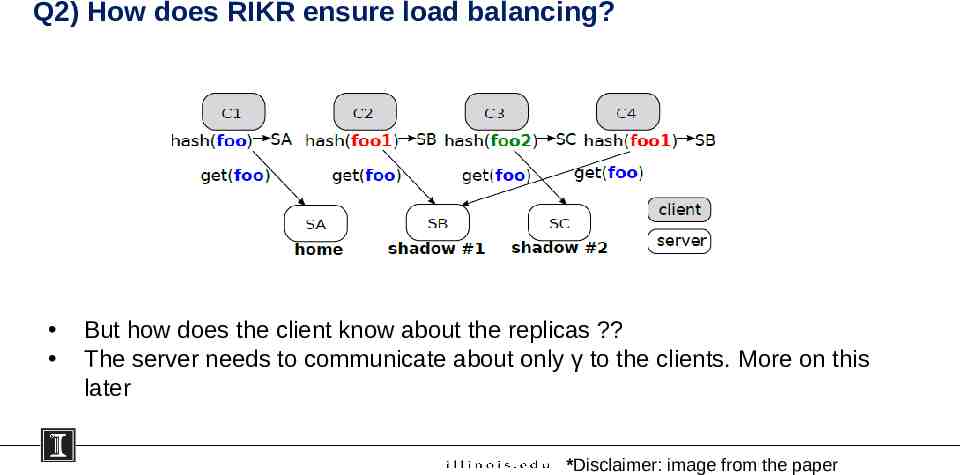

Q1) How do home servers replicate keys on shadow servers? Done via an algorithm called Reactive Internal Key Renaming (RIKR) RIKR assigns suffixes ranging from 1,2, ,γ to all the keys with (γ 1) shadow server1 foo home server foo foo1 hash set(k,v) shadow server2 foo2 foo

Q2) How does RIKR ensure load balancing? But how does the client know about the replicas ? The server needs to communicate about only γ to the clients. More on this later *Disclaimer: image from the paper

Consistency Model SPORE provides only eventual consistency But SPORE offers “read monotonicity” This means: – read from one client should always go to the same replica to ensure read monotonicity; – while write requests must always go to the home server to ensure sequential write order

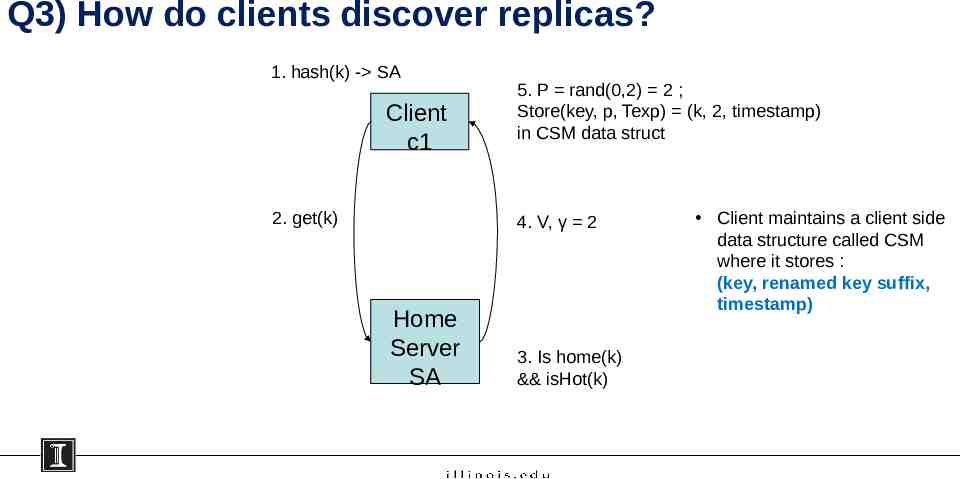

Q3) How do clients discover replicas? 1. hash(k) - SA Client c1 2. get(k) 5. P rand(0,2) 2 ; Store(key, p, Texp) (k, 2, timestamp) in CSM data struct 4. V, γ 2 Home Server SA 3. Is home(k) && isHot(k) Client maintains a client side data structure called CSM where it stores : (key, renamed key suffix, timestamp)

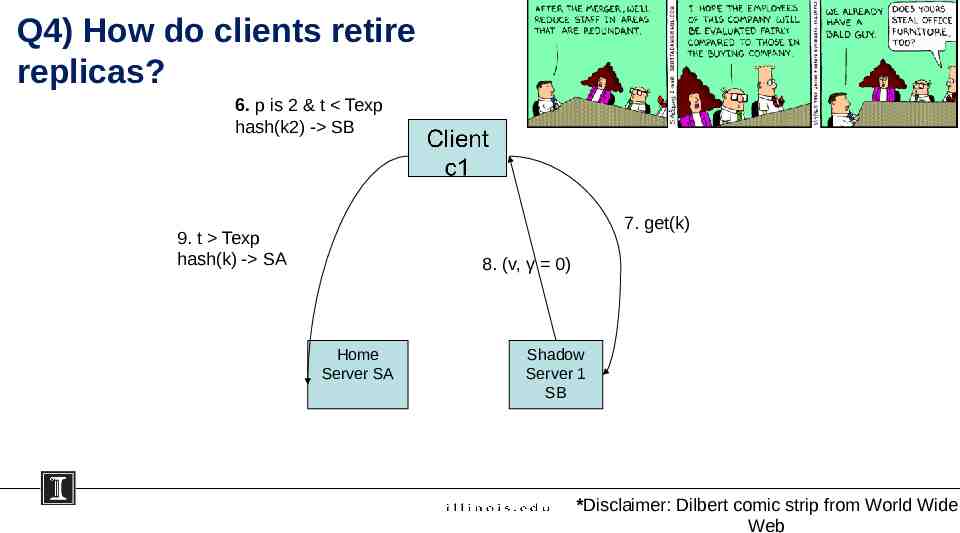

Q4) How do clients retire replicas? 6. p is 2 & t Texp hash(k2) - SB 7. get(k) 9. t Texp hash(k) - SA 8. (v, γ 0) Home Server SA Shadow Server 1 SB *Disclaimer: Dilbert comic strip from World Wide Web

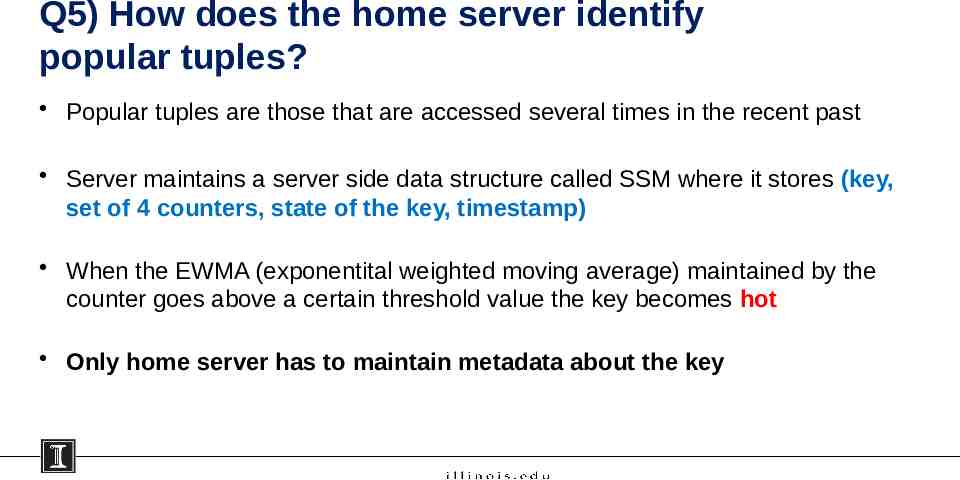

Q5) How does the home server identify popular tuples? Popular tuples are those that are accessed several times in the recent past Server maintains a server side data structure called SSM where it stores (key, set of 4 counters, state of the key, timestamp) When the EWMA (exponentital weighted moving average) maintained by the counter goes above a certain threshold value the key becomes hot Only home server has to maintain metadata about the key

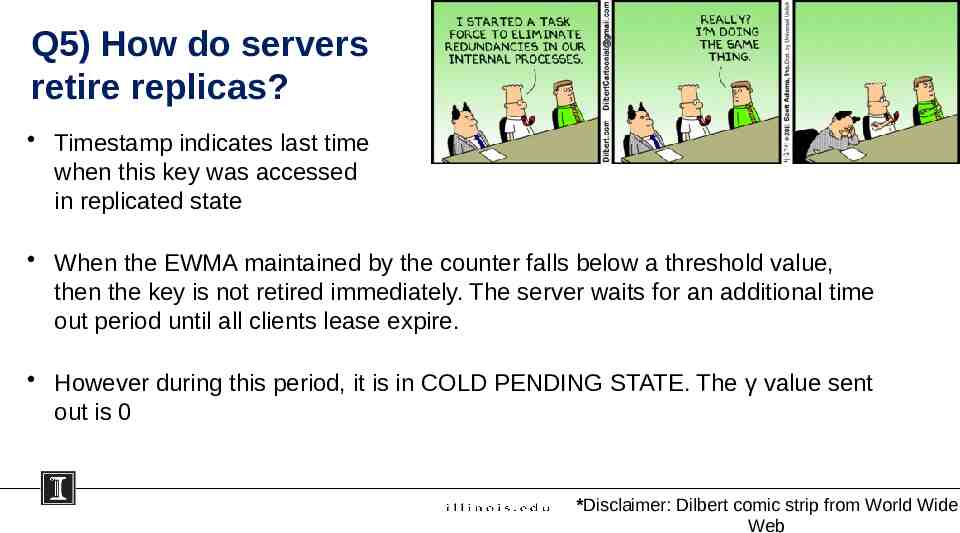

Q5) How do servers retire replicas? Timestamp indicates last time when this key was accessed in replicated state When the EWMA maintained by the counter falls below a threshold value, then the key is not retired immediately. The server waits for an additional time out period until all clients lease expire. However during this period, it is in COLD PENDING STATE. The γ value sent out is 0 *Disclaimer: Dilbert comic strip from World Wide Web

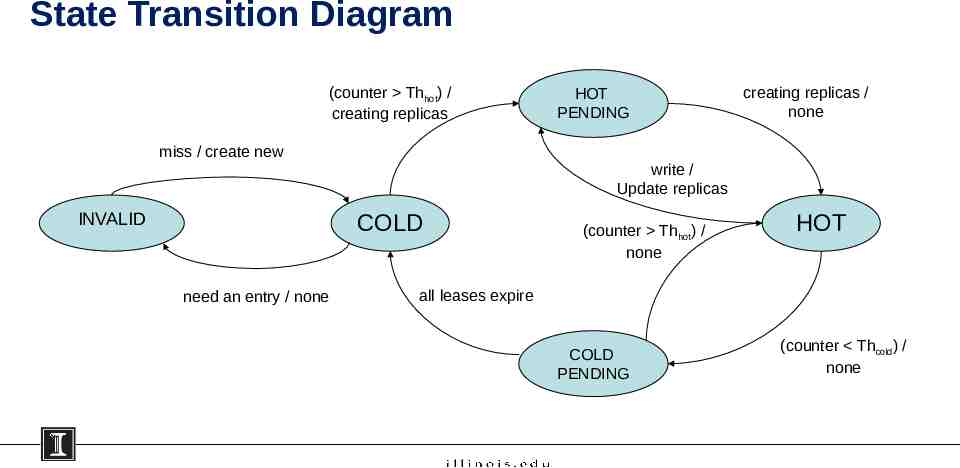

State Transition Diagram (counter Thhot) / creating replicas miss / create new INVALID creating replicas / none write / Update replicas COLD need an entry / none HOT PENDING (counter Thhot) / none HOT all leases expire COLD PENDING (counter Thcold) / none

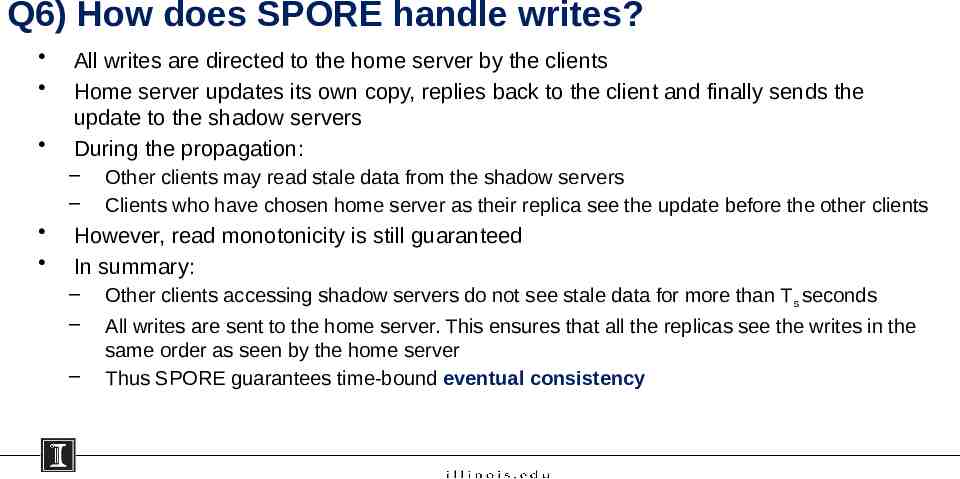

Q6) How does SPORE handle writes? All writes are directed to the home server by the clients Home server updates its own copy, replies back to the client and finally sends the update to the shadow servers During the propagation: – – Other clients may read stale data from the shadow servers Clients who have chosen home server as their replica see the update before the other clients However, read monotonicity is still guaranteed In summary: – – – Other clients accessing shadow servers do not see stale data for more than Ts seconds All writes are sent to the home server. This ensures that all the replicas see the writes in the same order as seen by the home server Thus SPORE guarantees time-bound eventual consistency

SE – SPORE – another variant SE - SPORE (standalone equivalent SPORE) provides atomic writes in the memory caching tier Essentially, the home server waits for all replica updates before any node / replica can serve the value to a reader. This is done using a two – phase atomic – update protocol This makes write operation 2 times slower (for 1 to 2 replicas)

Optimizations Sampling Hotspot Triggering Mixed read/write workloads

Server Failures Server failures are complicated in baseline memcached system and cause data staleness Even gutter pools cannot prevent data staleness In SPORE, home server failures causes orphans Network partition wreck havoc In summary, SPORE with or without replicas cannot avoid data staleness This means replication purely serves load balancing and not Fault Tolerance *Disclaimer: Dilbert comic strip from World Wide Web

Agenda Motivation Background System design Evaluation Discussion

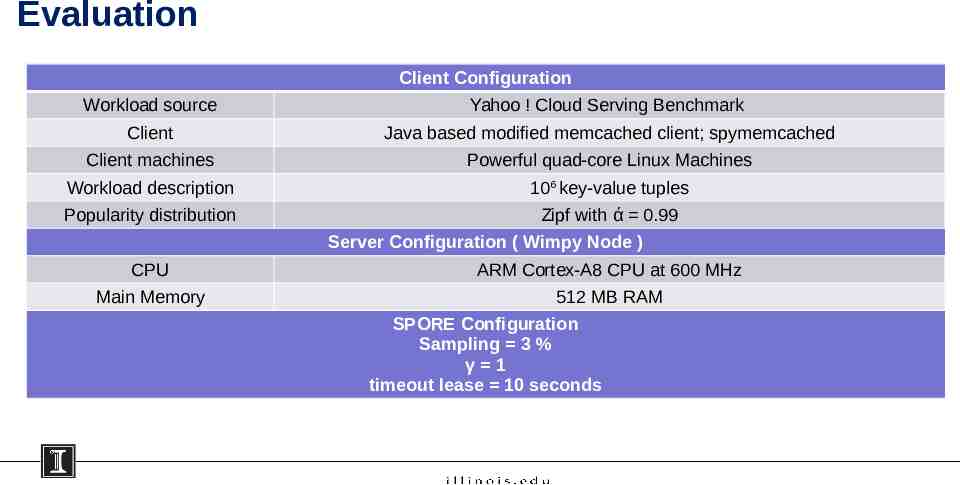

Evaluation Client Configuration Workload source Yahoo ! Cloud Serving Benchmark Client Java based modified memcached client; spymemcached Client machines Powerful quad-core Linux Machines Workload description 106 key-value tuples Popularity distribution Zipf with ά 0.99 Server Configuration ( Wimpy Node ) CPU ARM Cortex-A8 CPU at 600 MHz Main Memory 512 MB RAM SPORE Configuration Sampling 3 % γ 1 timeout lease 10 seconds

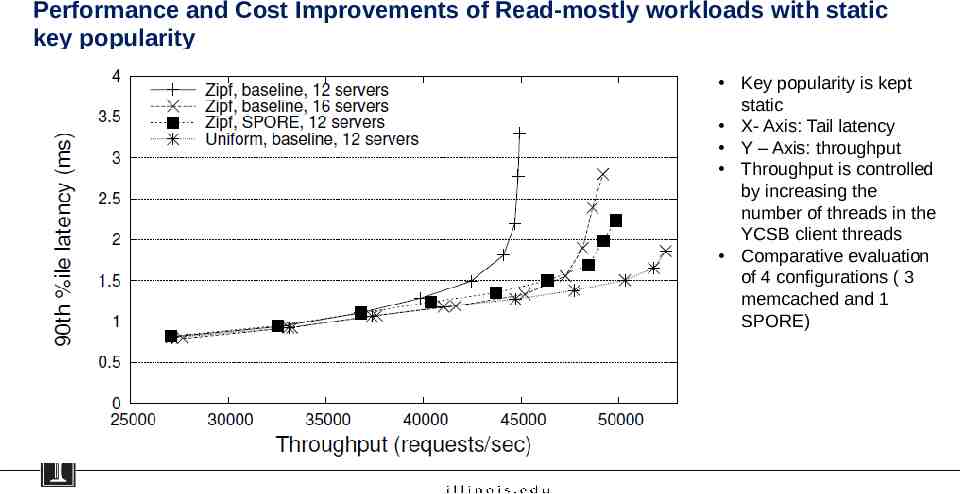

Performance and Cost Improvements of Read-mostly workloads with static key popularity Key popularity is kept static X- Axis: Tail latency Y – Axis: throughput Throughput is controlled by increasing the number of threads in the YCSB client threads Comparative evaluation of 4 configurations ( 3 memcached and 1 SPORE)

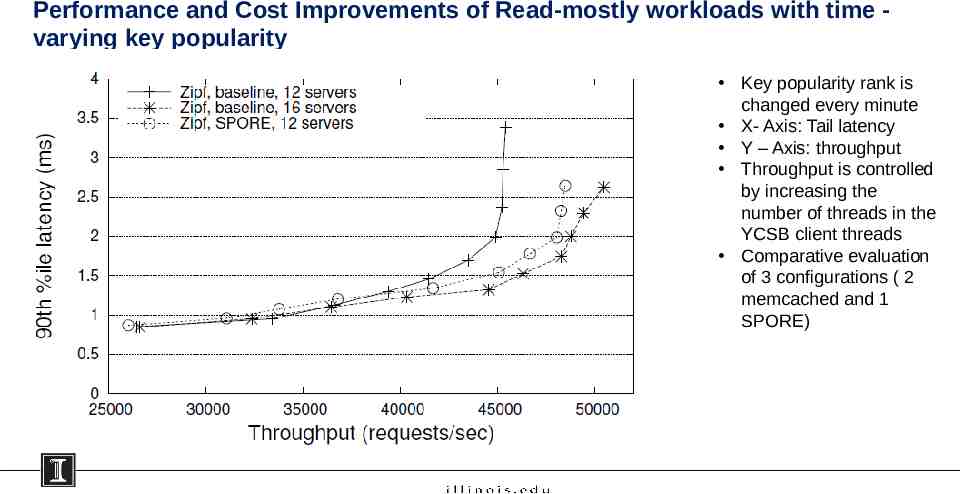

Performance and Cost Improvements of Read-mostly workloads with time varying key popularity Key popularity rank is changed every minute X- Axis: Tail latency Y – Axis: throughput Throughput is controlled by increasing the number of threads in the YCSB client threads Comparative evaluation of 3 configurations ( 2 memcached and 1 SPORE)

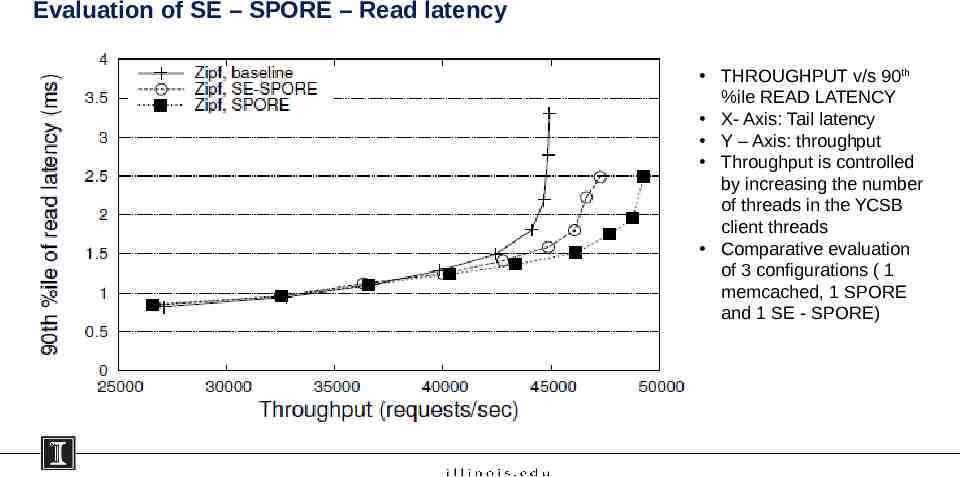

Evaluation of SE – SPORE – Read latency THROUGHPUT v/s 90th %ile READ LATENCY X- Axis: Tail latency Y – Axis: throughput Throughput is controlled by increasing the number of threads in the YCSB client threads Comparative evaluation of 3 configurations ( 1 memcached, 1 SPORE and 1 SE - SPORE)

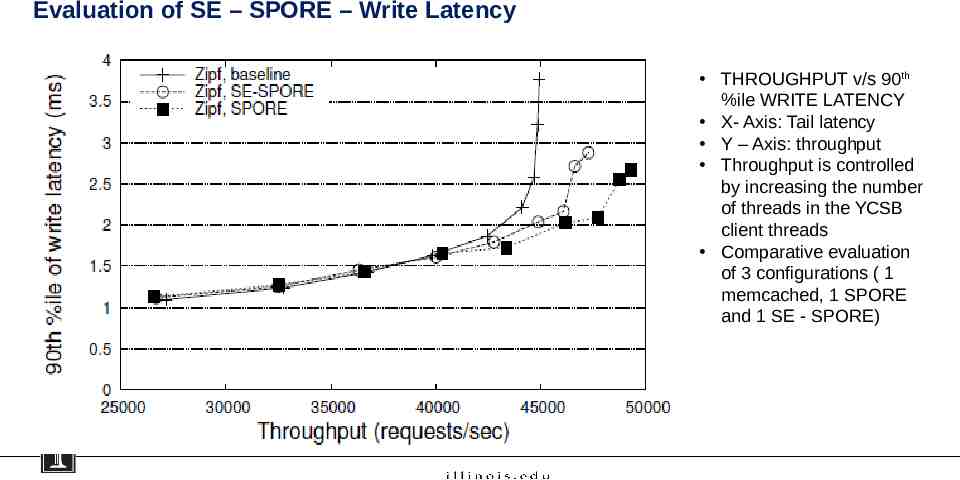

Evaluation of SE – SPORE – Write Latency THROUGHPUT v/s 90th %ile WRITE LATENCY X- Axis: Tail latency Y – Axis: throughput Throughput is controlled by increasing the number of threads in the YCSB client threads Comparative evaluation of 3 configurations ( 1 memcached, 1 SPORE and 1 SE - SPORE)

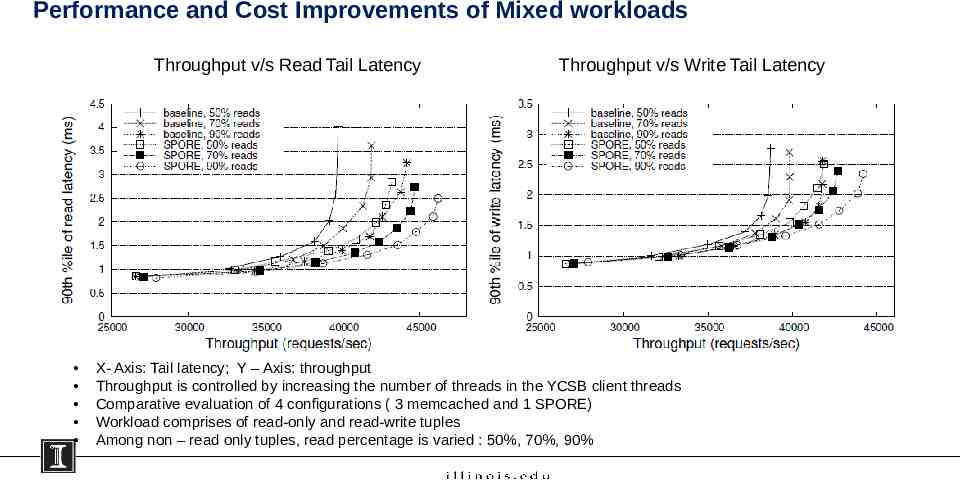

Performance and Cost Improvements of Mixed workloads Throughput v/s Read Tail Latency Throughput v/s Write Tail Latency X- Axis: Tail latency; Y – Axis: throughput Throughput is controlled by increasing the number of threads in the YCSB client threads Comparative evaluation of 4 configurations ( 3 memcached and 1 SPORE) Workload comprises of read-only and read-write tuples Among non – read only tuples, read percentage is varied : 50%, 70%, 90%

Limitations of SPORE Space overhead Latency overhead Communication overhead

Agenda Motivation Background System design Evaluation Discussion

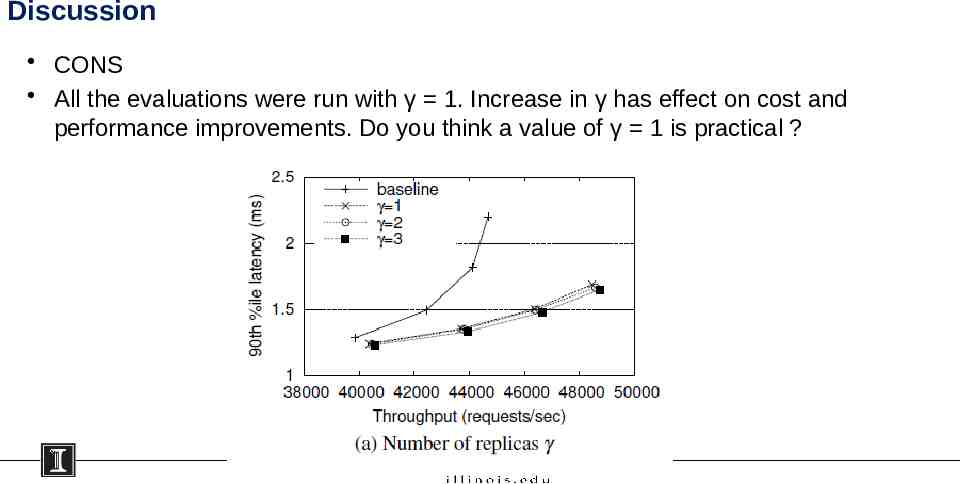

Discussion CONS All the evaluations were run with γ 1. Increase in γ has effect on cost and performance improvements. Do you think a value of γ 1 is practical ?

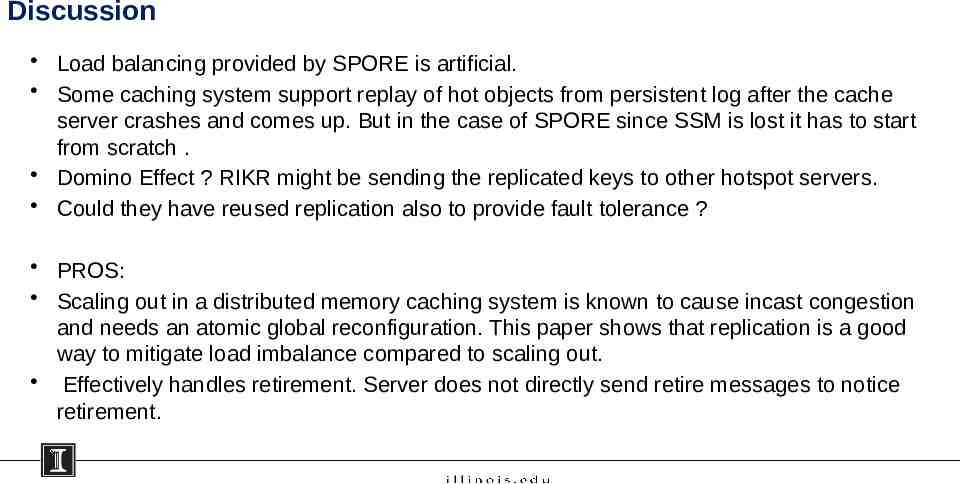

Discussion Load balancing provided by SPORE is artificial. Some caching system support replay of hot objects from persistent log after the cache server crashes and comes up. But in the case of SPORE since SSM is lost it has to start from scratch . Domino Effect ? RIKR might be sending the replicated keys to other hotspot servers. Could they have reused replication also to provide fault tolerance ? PROS: Scaling out in a distributed memory caching system is known to cause incast congestion and needs an atomic global reconfiguration. This paper shows that replication is a good way to mitigate load imbalance compared to scaling out. Effectively handles retirement. Server does not directly send retire messages to notice retirement.

Thank you !

BACKUP SLIDES

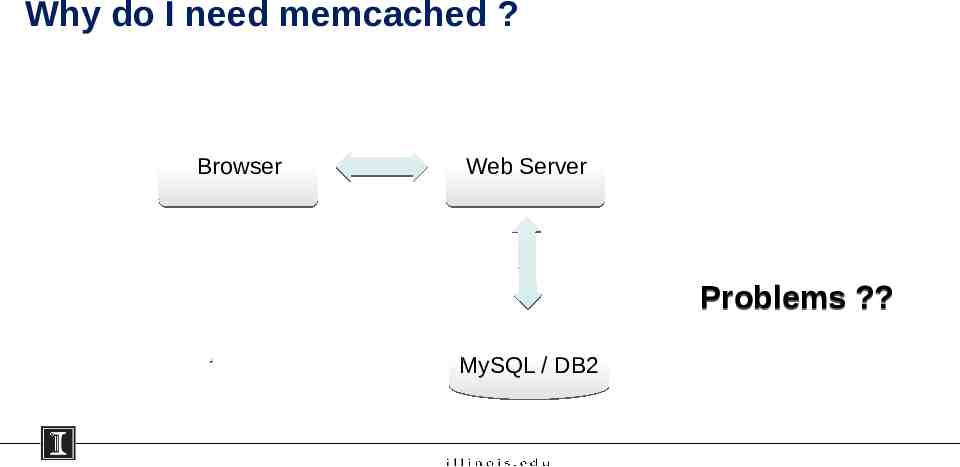

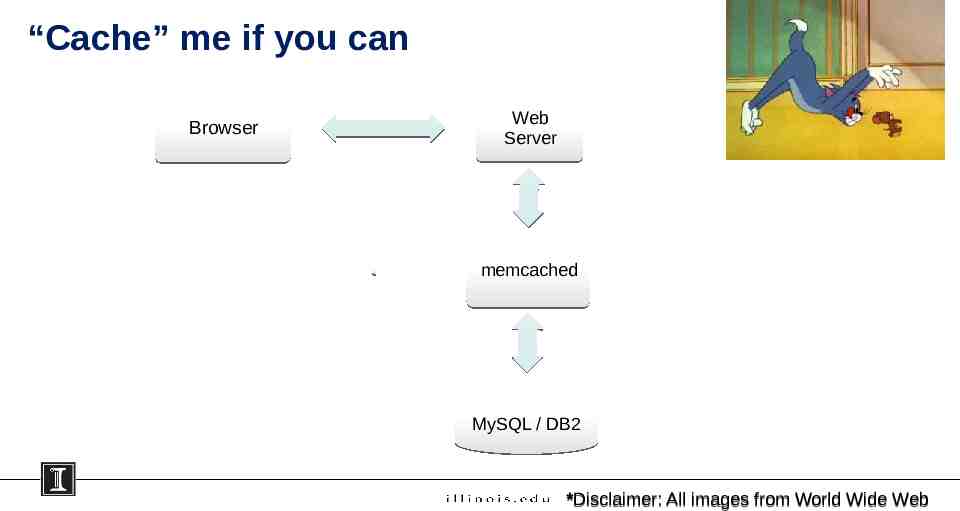

Why do I need memcached ? Browser Web Server Problems ? MySQL / DB2

“Cache” me if you can Browser Web Server memcached MySQL / DB2 *Disclaimer: All images from World Wide Web