Record Linkage/ Duplicate Elimination Sunita

35 Slides1.41 MB

Record Linkage/ Duplicate Elimination Sunita Sarawagi [email protected]

The de-duplication problem Given a list of semi-structured records, find all records that refer to a same entity Example applications: Data warehousing: merging name/address lists Entity: a) Person b) Household Automatic citation databases (Citeseer): references Entity: paper

Example Link together people from several data sources DERYCK ,D ,SOZA SOUZA ,D ,D ,, GORA VILLA CHAFEKAR ,RAMCHANDRA . VIMAN NAGAR ,03 ,GERA VILLA ,VIMAN NAGAR PUNE ,411014 ,1 411014 , Taxpayers Duplicates: Land records Passport Transport Telephone SOUZA ,D ,D ,,GORA VILLA ,,VIMAN NAGAR ,,,411014 , DERYCK ,D ,SOZA ,03 ,GERA VILLA ,,VIMAN NAGAR PUNE, 411014 Non-duplicates: CHAFEKAR ,RAMCHANDRA ,DAMODAR ,SHOP 8 ,H NO 509 NARAYAN PETH PUNE 411030 CHITRAV ,RAMCHANDRA ,D ,FLAT 5 ,H NO 2105 SADASHIV PETH PUNE 411 030

Challenges Errors and inconsistencies in data Spotting duplicates might be hard as they may be spread far apart: may not be group-able using obvious keys Domain-specific Existing manual approaches require retuning with every new domain

How are Such Problems Created? Human factors Incorrect data entry Ambiguity during data transformations Application factors Erroneous applications populating databases Faulty database design (constraints not enforced) Obsolence Real-world is dynamic

Database level linkage Authors Authors Titles Patent database (XML) Inventor Title Assignee Abstract Inventor list Computer science publications (DBLP) Probabilistic links Exploit various kinds of information in decreasing order of information content and increasing computation overhead Authors Titles Abstracts Medical publications

Multi-table data Vendor Replacement Vendor-id Name Address Joining-date part-id ship-id date Orders Part-id Vendor-id Order date Price Parts part-id description importance replacement frequency Ship ship-id name weight capacity Trip-log ship-id trip start date trip length destination Duplicates can be in all of the interlinked tables Goal: resolving them simultaneously could be better

Outline Part I: Motivation, similarity measures (90 min) Data quality, applications Linkage methodology, core measures Learning core measures Linkage based measures Part II: Efficient algorithms for approximate join (60 min) Part III: Clustering/partitioning algorithms (30 min)

String similarity measures Token-based Examples Suitable for large documents Character-based Examples: Jaccard TF-IDF Cosine similarities Edit-distance and variants like Levenshtein, Jaro-Winkler Soundex Suitable for short strings with spelling mistakes Hybrids

Token based Tokens/words Similarity: various measures of overlap of two sets S,T Jaccard(S,T) S T / S T Example ‘AT&T Corporation’ - ‘AT&T’ , ‘Corporation’ S ‘AT&T Corporation’ - ‘AT&T’ , ‘Corporation’ T ‘AT&T Corp’ - ‘AT&T’ , ‘Corp.’ Jaccard(S,T) 1/3 Variants: weights attached with each token Useful for large strings: example web documents

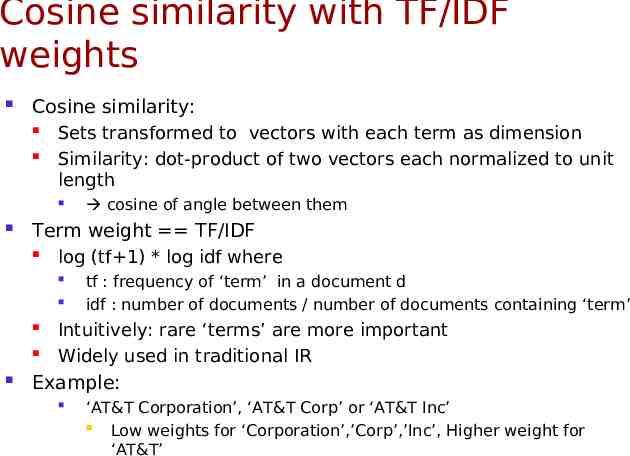

Cosine similarity with TF/IDF weights Cosine similarity: Sets transformed to vectors with each term as dimension Similarity: dot-product of two vectors each normalized to unit length Term weight TF/IDF log (tf 1) * log idf where cosine of angle between them tf : frequency of ‘term’ in a document d idf : number of documents / number of documents containing ‘term’ Intuitively: rare ‘terms’ are more important Widely used in traditional IR Example: ‘AT&T Corporation’, ‘AT&T Corp’ or ‘AT&T Inc’ Low weights for ‘Corporation’,’Corp’,’Inc’, Higher weight for ‘AT&T’

Edit Distance [G98] Given two strings, S,T, edit(S,T): Minimum cost sequence of operations to transform S to T. O(m2) versus O(2m log m) for token-based measures Several variants (gaps,weights) --- becomes NP-complete easily. Example: edit(Error,Eror) 1, edit(great,grate) 2 Folklore dynamic programming algorithm to compute edit(); Character Operations: I (insert), D (delete), R (Replace). Varying costs of operations: can be learnt [RY97]. Observations Suitable for common typing mistakes on small strings Comprehensive vs Comprenhensive Problematic for specific domains

Edit Distance with affine gaps Differences between ‘duplicates’ often due to abbreviations or whole word insertions. Allow sequences of mis-matched characters (gaps) in the alignment of two strings. Penalty: using the affine cost model IBM Corp. closer to ATT Corp. than IBM Corporation John Smith vs John Edward Smith vs John E. Smith Cost(g) s e l s: cost of opening a gap e: cost of extending the gap l: length of a gap Similar dynamic programming algorithm Parameters domain-dependent, learnable, e.g., [BM03, MBP05]

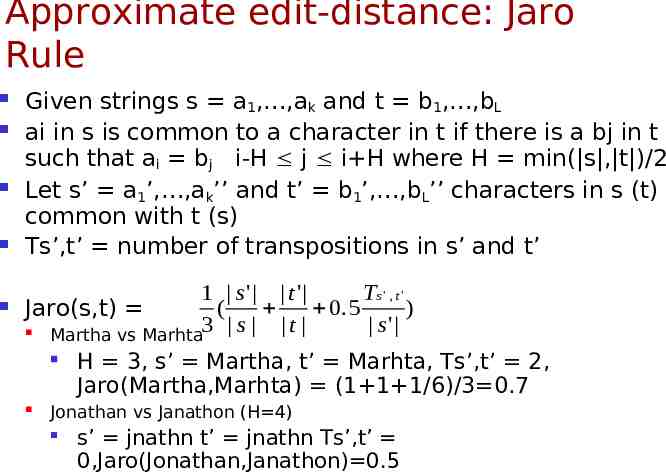

Approximate edit-distance: Jaro Rule Given strings s a1, ,ak and t b1, ,bL ai in s is common to a character in t if there is a bj in t such that ai bj i-H j i H where H min( s , t )/2 Let s’ a1’, ,ak’’ and t’ b1’, ,bL’’ characters in s (t) common with t (s) Ts’,t’ number of transpositions in s’ and t’ 1 s' t ' Ts ' , t ' ( 0 . 5 ) Jaro(s,t) s' Martha vs Marhta3 s t H 3, s’ Martha, t’ Marhta, Ts’,t’ 2, Jaro(Martha,Marhta) (1 1 1/6)/3 0.7 Jonathan vs Janathon (H 4) s’ jnathn t’ jnathn Ts’,t’ 0,Jaro(Jonathan,Janathon) 0.5

Hybrids [CRF03] Example: Edward, John Vs Jon Edwerd Let S {a1, ,aKK}, T {b1, bL} sets of terms: 1 L max Sim(S,T) j 1 sim' (ai, bj) K i 1 Sim’() some other similarity function C(t,S,T) {w S s.t v T, sim’(w,v) t} D(w,T) maxv Tsim’(w,v), w C(t,S,T) sTFIDF W ( w, S ) *W ( w, T ) * D(w, T ) w C ( t , S ,T )

Soundex Encoding A phonetic algorithm that indexes names by their sounds when pronounced in english. Consists of the first letter of the name followed by three numbers. Numbers encode similar sounding consonants. Remove all W, H B, F, P, V encoded as 1, C,G,J,K,Q,S,X,Z as 2 D,T as 3, L as 4, M,N as 5, R as 6, Remove vowels Concatenate first letter of string with first 3 numerals Ex: great and grate become G6EA3 and G6A3E and then G63 More recent, metaphone, double metaphone etc.

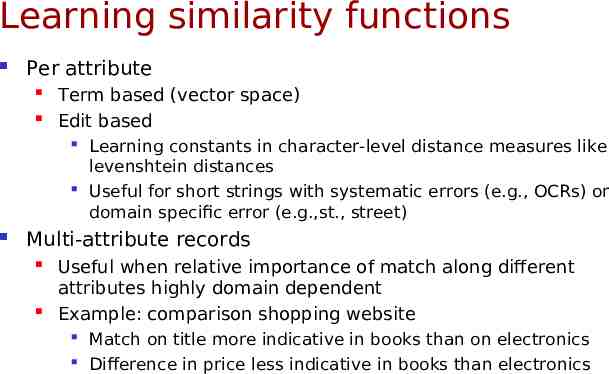

Learning similarity functions Per attribute Term based (vector space) Edit based Learning constants in character-level distance measures like levenshtein distances Useful for short strings with systematic errors (e.g., OCRs) or domain specific error (e.g.,st., street) Multi-attribute records Useful when relative importance of match along different attributes highly domain dependent Example: comparison shopping website Match on title more indicative in books than on electronics Difference in price less indicative in books than electronics

Machine Learning approach Given examples of duplicates and non-duplicate pairs, learn to predict if pair is duplicate or not. Input features: Various kinds of similarity functions between attributes Edit distance, Soundex, N-grams on text attributes Absolute difference on numeric attributes Capture domain-specific knowledge on comparing data

The learning approach Example labeled Similarity functionsYearDifference 1 f1 f2 fn pairs All-Ngrams 0.48 Record 1 D Record 2 1.0 0.4 0.2 1 Record 1 N Record 3 0.0 0.1 0.3 0 Record 4 D Record 5 Non-Duplicate Non Duplicate 0.3 0.4 0.4 1 6 7 8 9 10 11 AuthorTitleNgrams 0.4 ClassifierTitleIsNull 1 PageMatch 0.5 Duplicate Duplicat e AuthorEditDist 0.8 Duplicate Mapped examples Unlabeled list Non-Duplicate Record Record Record Record Record Record Similarit y function s 0.0 1.0 0.6 0.7 0.3 0.0 0.3 0.6 0.1 0.4 0.2 0.1 0.4 0.1 0.8 0.1 0.3 0.2 0.5 0.6 0.4 0.1 0.1 0.5 ? ? ? ? ? ? ? ? 0.0 0.1 0.3 Duplicate 1.0 0.6 0.7 0.3 0.0 0.3 0.6 0.4 0.2 0.1 0.4 0.1 0.8 0.1 0.2 0.5 0.6 0.4 0.1 0.1 0.5 0 1 0 0 1 0 1 1

Experiences with the learning approach Too much manual search in preparing training data Hard to spot challenging and covering sets of duplicates in large lists Even harder to find close non-duplicates that will capture the nuances examine instances that are similar on one attribute but dissimilar on another Active learning is a generalization of this!

The active learning approach Example labeled Similarity functions f1 f2 fn pairs Record 1 D Record 2 1.0 0.4 0.2 1 Record 3 N Record 4 0.0 0.1 0.3 0 Classifier Unlabeled list 0.0 Record Record Record Record Record Record 6 7 8 9 10 11 1.0 0.6 0.7 0.3 0.0 0.3 0.6 0.1 0.4 0.2 0.1 0.4 0.1 0.8 0.1 0.3 0.2 0.5 0.6 0.4 0.1 0.1 0.5 ? ? ? ? ? ? ? ? Active learner 0.7 0.1 0.6 1 0.3 0.4 0.4 0 0.7 0.1 0.6 ? 0.3 0.4 0.4 ?

The ALIAS deduplication system Interactive discovery of deduplication function using active learning Efficient active learning on large lists using novel indexing mechanisms Efficient application of learnt function on large lists using Novel cluster-based evaluation engine Cost-based optimizer

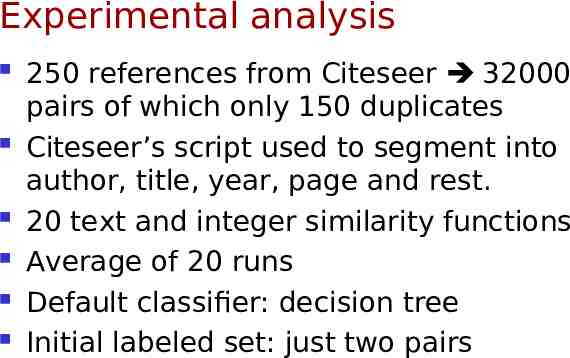

Experimental analysis 250 references from Citeseer 32000 pairs of which only 150 duplicates Citeseer’s script used to segment into author, title, year, page and rest. 20 text and integer similarity functions Average of 20 runs Default classifier: decision tree Initial labeled set: just two pairs

Benefits of active learning Active learning much better than random With only 100 active instances 97% accuracy, Random only 30% Committee-based selection close to optimal

Analyzing selected instances Fraction of duplicates in selected instances: 44% starting with only 0.5% Is the gain due to increased fraction of duplicates? Replaced non-duplicates in selected set with random non-dups Result only 40% accuracy!!!

Finding all duplicate pairs in large lists Input: a large list of records R with string attributes Output: all pairs (S,T) of records in R which satisfy a Similarity Criteria: More complicated similarity functions use these as filters (high recall, low precision) Naïve method: for each record pair, compute similarity score Jaccard(S,T) 0.7 Overlapping tokens (S,T) 5 TF-IDF-Cosine(S,T) 0.8 Edit-distance(S,T) k I/O and CPU intensive, not scalable to millions of records Goal: reduce O(n2) cost to O(n*w), where w n Reduce number of pairs on which similarity is computed

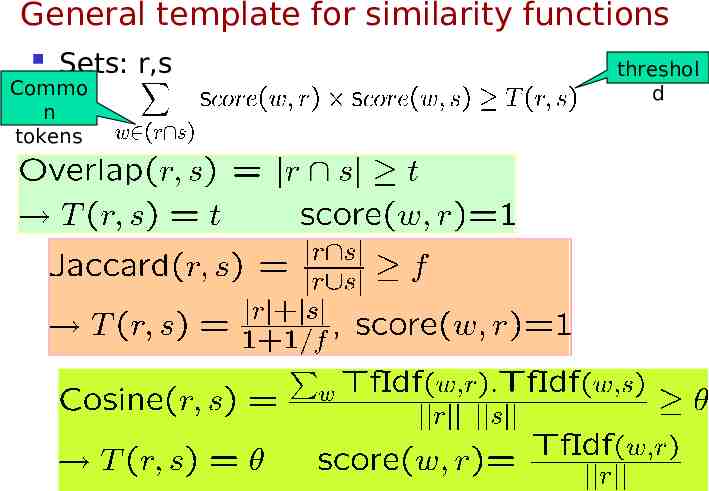

General template for similarity functions Sets: r,s Commo n tokens threshol d

Approximating edit distance [GIJ 01] EditDistance(s,t) d q-grams(s) qgrams(t) max( s , t ) - (d-1)*q – 1 Q-grams (sequence of q-characters in a field) ‘AT&T Corporation’ 3-grams: {‘AT&’,’T&T’,’&T ‘, ‘T C’,’ Co’,’orp’,’rpo’,’por’,’ora’,’rat’,’ati’,’tio’,’ion’} Typically, q 3 Large q-gram sets Approximate large q-gram sets to smaller sets

Pair-Count Step 1: Pass over data, for each token create list of sets that contain it Step 2: generate pairs of sets, count and output those with count T Data 2 Inverted index t1 t2 Self-join lists 2 1 The pair-counting table could be large (Broder et al WWW 1997) too memory intensive Not good when list lengths highly skewed

Probe-Count Step 1: Create inverted index Step 2: Using each record, probe merge lists to find rids in T of them Data Inverted index w1, w4,wk w2 Heap w1

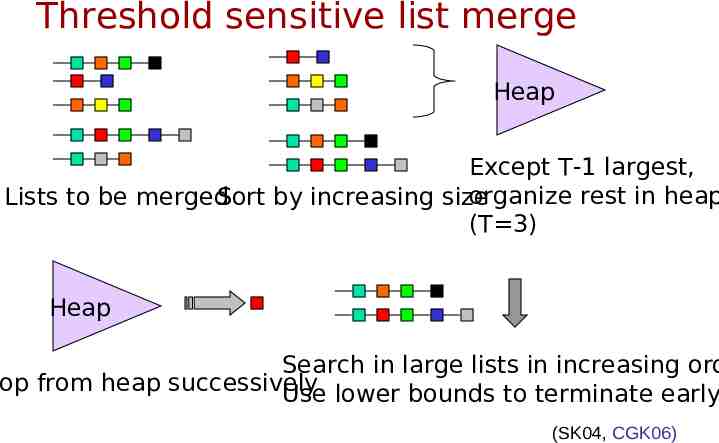

Threshold sensitive list merge Heap Except T-1 largest, organize rest in heap Lists to be merged Sort by increasing size (T 3) Heap Search in large lists in increasing ord Pop from heap successively Use lower bounds to terminate early (SK04, CGK06)

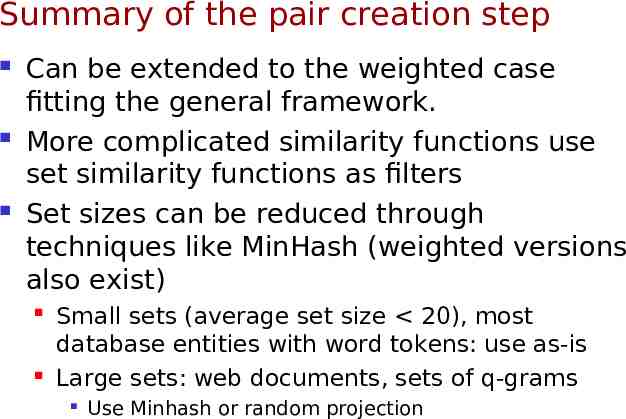

Summary of the pair creation step Can be extended to the weighted case fitting the general framework. More complicated similarity functions use set similarity functions as filters Set sizes can be reduced through techniques like MinHash (weighted versions also exist) Small sets (average set size 20), most database entities with word tokens: use as-is Large sets: web documents, sets of q-grams Use Minhash or random projection

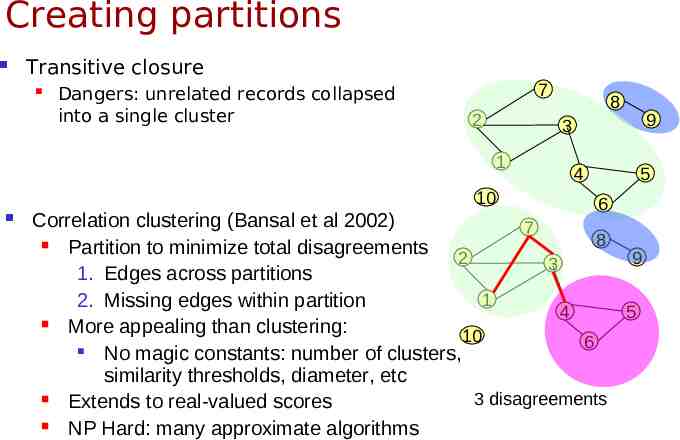

Creating partitions Transitive closure Dangers: unrelated records collapsed into a single cluster 7 2 9 3 1 10 8 4 5 6 Correlation clustering (Bansal et al 2002) 7 8 Partition to minimize total disagreements 2 9 3 1. Edges across partitions 1 2. Missing edges within partition 4 5 More appealing than clustering: 10 6 No magic constants: number of clusters, similarity thresholds, diameter, etc 3 disagreements Extends to real-valued scores NP Hard: many approximate algorithms

Empirical results on data partitioning Digital cameras Camcoder Luggage (From: Bilenko et al, Setup: Online comparison shopping, 2005) Fields: name, model, description, price Learner: Online perceptron learner Complete-link clustering single-link clustering(transitive closure) An issue: when to stop merging clusters

References [CGGM04] Surajit Chaudhuri, Kris Ganjam, Venkatesh Ganti, Rajeev Motwani: Robust and Efficient Fuzzy Match for Online Data Cleaning. SIGMOD Conference 2003: 313-324 [CGG 05] Surajit Chaudhuri, Kris Ganjam, Venkatesh Ganti, Rahul Kapoor, Vivek R. Narasayya, Theo Vassilakis: Data cleaning in microsoft SQL server 2005. SIGMOD Conference 2005: 918-920 [CGK06] Surajit Chaudhuri, Venkatesh Ganti, Raghav Kaushik: A primitive operator for similarity [CRF03] William W. Cohen, Pradeep Ravikumar, Stephen E. Fienberg: A Comparison of String Distance Metrics for Name-Matching Tasks. IIWeb 2003: 73-78 [J89] M. A. Jaro: Advances in record linkage methodology as applied to matching the 1985 census of Tampa, Florida. Journal of the American Statistical Association 84: 414-420. [ME97] Alvaro E. Monge, Charles Elkan: An Efficient Domain-Independent Algorithm for Detecting Approximately Duplicate Database Records. DMKD 1997 [RY97] E. Ristad, P. Yianilos : Learning string edit distance. IEEE Pattern analysis and machine intelligence 1998. [SK04] Sunita Sarawagi, Alok Kirpal: Efficient set joins on similarity predicates. SIGMOD Conference 2004: 743-754 [W99] William E. Winkler: The state of record linkage and current research problems. IRS publication R99/04 (http://www.census.gov/srd/www/byname.html) [Y02] William E. Yancey: BigMatch: A program for extracting probable matches from a large file for record linkage. RRC 2002-01. Statistical Research Division, U.S. Bureau of the Census.