

Network Functions Virtualisation

- Bob Briscoe, Chief Researcher, BT
- Don Clarke, Pete Willis, Andy Reid, Paul Veitch (BT)
- further acknowledgements within slides

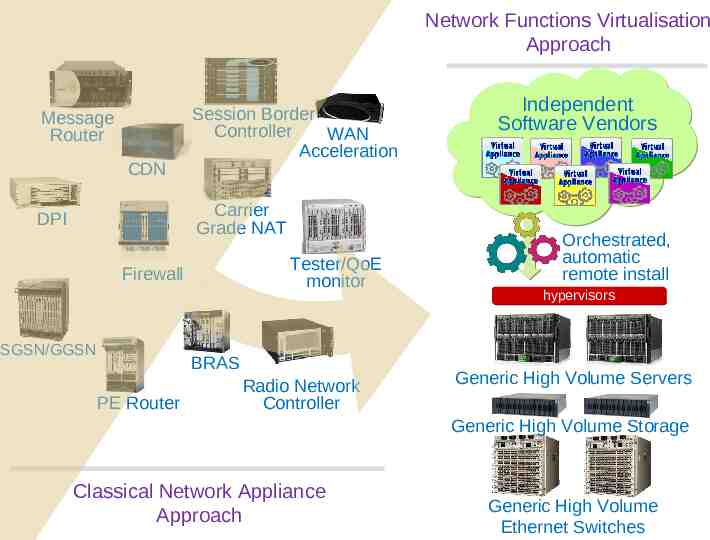

Classical Network Appliance Approach

- Message Router
- CDN
- Session Border Controller
- WAN Acceleration
- Carrier Grade NAT
- DPI
- Tester/QoE monitor
- Firewall
- SGSN/GGSN
- PE Router
- BRAS
- Radio Network Controller

Network Functions Virtualisation Approach

- Independent Software Vendors
- Orchestrated, automatic remote install
- hypervisors
- Generic High Volume Servers
- Generic High Volume Storage
- Generic High Volume Ethernet Switches

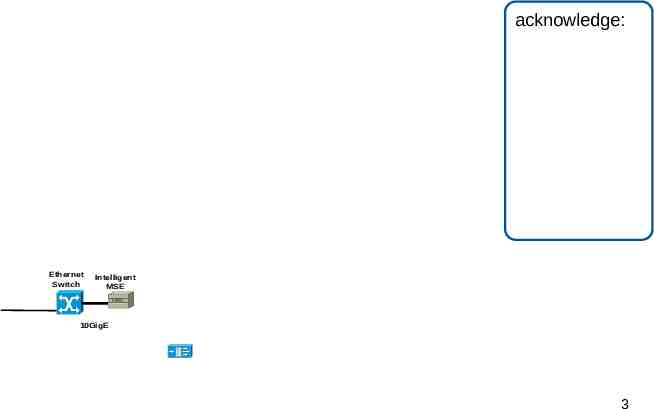

If price-performance is good enough, rapid deployment gains come free

- Mar’12: Proof of Concept testing
  - Combined BRAS & CDN functions on Intel Xeon Processor 5600 Series HP c7000 BladeSystem using Intel 82599 10 Gigabit Ethernet Controller sidecars
  - BRAS chosen as an “acid test”
  - CDN chosen because it architecturally complements BRAS
- BRAS created from scratch, so minimal functionality:
  - PPPoE; only PTA, priority queuing; no RADIUS, VRFs
- CDN COTS: fully functioning commercial product
- Significant management stack:
  1. Instantiation of BRAS & CDN modules on bare server
  2. Configuration of BRAS & Ethernet switches via Tail-F
  3. Configuration of CDN via VVue mgt. sys.
  4. Trouble2Resolve via HP mgmt system

Diagram labels: acknowledge; Systems Management; Test Equipment & Traffic Generators; Ethernet Switch; 10GigE; Video Viewing & Internet Browsing; Ethernet Switch; Intelligent MSE; IP VPN Router; Intranet server; 10GigE; Content server; PPPoE; BRAS & CDN; Internet; IPoE; IPoE

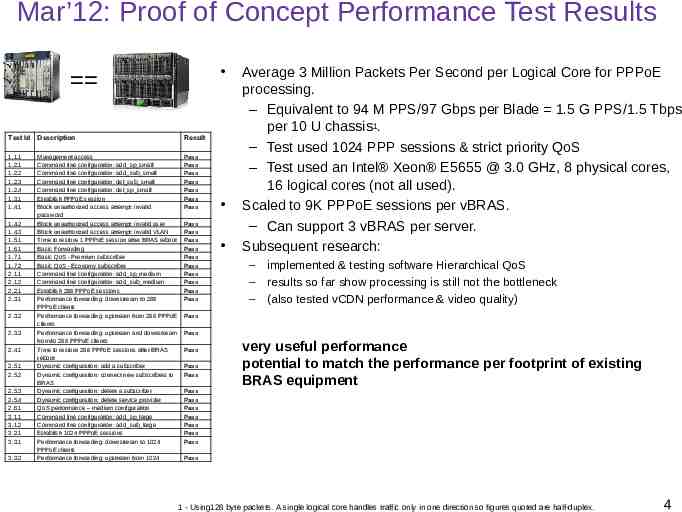

Mar’12: Proof of Concept Performance Test Results

| Test Id | Description | Result |
|---|---|---|
| 1.1.1 | Management access | Pass |
| 1.2.1 | Command line configuration: add sp small | Pass |
| 1.2.2 | Command line configuration: add sub small | Pass |
| 1.2.3 | Command line configuration: del sub small | Pass |
| 1.2.4 | Command line configuration: del sp small | Pass |
| 1.3.1 | Establish PPPoE session | Pass |
| 1.4.1 | Block unauthorized access attempt: invalid password | Pass |
| 1.4.2 | Block unauthorized access attempt: invalid user | Pass |
| 1.4.3 | Block unauthorized access attempt: invalid VLAN | Pass |
| 1.5.1 | Time to restore 1 PPPoE session after BRAS reboot | Pass |
| 1.6.1 | Basic Forwarding | Pass |
| 1.7.1 | Basic QoS - Premium subscriber | Pass |
| 1.7.2 | Basic QoS - Economy subscriber | Pass |
| 2.1.1 | Command line configuration: add sp medium | Pass |
| 2.1.2 | Command line configuration: add sub medium | Pass |
| 2.2.1 | Establish 288 PPPoE sessions | Pass |
| 2.3.1 | Performance forwarding: downstream to 288 PPPoE clients | Pass |
| 2.3.2 | Performance forwarding: upstream from 288 PPPoE clients | Pass |
| 2.3.3 | Performance forwarding: upstream and downstream from/to 288 PPPoE clients | Pass |
| 2.4.1 | Time to restore 288 PPPoE sessions after BRAS reboot | Pass |
| 2.5.1 | Dynamic configuration: add a subscriber | Pass |
| 2.5.2 | Dynamic configuration: connect new subscribers to BRAS | Pass |
| 2.5.3 | Dynamic configuration: delete a subscriber | Pass |
| 2.5.4 | Dynamic configuration: delete service provider | Pass |
| 2.6.1 | QoS performance – medium configuration | Pass |
| 3.1.1 | Command line configuration: add sp large | Pass |
| 3.1.2 | Command line configuration: add sub large | Pass |
| 3.2.1 | Establish 1024 PPPoE sessions | Pass |
| 3.3.1 | Performance forwarding: downstream to 1024 PPPoE clients | Pass |
| 3.3.2 | Performance forwarding: upstream from 1024 | Pass |

- Average 3 Million Packets Per Second per Logical Core for PPPoE processing.
  - Equivalent to 94 M PPS/97 Gbps per Blade = 1.5 G PPS/1.5 Tbps per 10 U chassis (note 1).
  - Test used 1024 PPP sessions & strict priority QoS
  - Test used an Intel Xeon E5655 @ 3.0 GHz, 8 physical cores, 16 logical cores (not all used).
- Scaled to 9K PPPoE sessions per vBRAS.
  - Can support 3 vBRAS per server.
- Subsequent research:
  - implemented & testing software Hierarchical QoS
  - results so far show processing is still not the bottleneck
  - (also tested vCDN performance & video quality)
- Very useful performance: potential to match the performance per footprint of existing BRAS equipment

Note 1: Using 128 byte packets. A single logical core handles traffic only in one direction so figures quoted are half-duplex.
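The throughput figures on this slide can be sanity-checked with a little arithmetic. The sketch below converts the quoted packet rates to bit rates using the 128 byte packet size from the note. The figure of 16 blades per chassis is an assumption (it is not stated on the slide), chosen because it reproduces the quoted chassis totals. The per-blade result comes out near 96 Gbps rather than exactly 97 Gbps, presumably because the slide's packet rates are rounded.

```python
# Back-of-envelope check of the slide's throughput figures.
# PACKET_BYTES comes from the slide's note; BLADES_PER_CHASSIS is an
# assumption made for illustration, not a figure from the slide.

PACKET_BYTES = 128
BLADES_PER_CHASSIS = 16  # assumed: fully populated chassis


def pps_to_gbps(pps: float, packet_bytes: int = PACKET_BYTES) -> float:
    """Convert packets per second to gigabits per second at a fixed packet size."""
    return pps * packet_bytes * 8 / 1e9


per_core_gbps = pps_to_gbps(3e6)      # 3 M PPS per logical core
per_blade_gbps = pps_to_gbps(94e6)    # 94 M PPS per blade
per_chassis_gpps = 94e6 * BLADES_PER_CHASSIS / 1e9
per_chassis_tbps = per_blade_gbps * BLADES_PER_CHASSIS / 1e3

print(f"per logical core: {per_core_gbps:.2f} Gbps")
print(f"per blade:        {per_blade_gbps:.1f} Gbps")
print(f"per chassis:      {per_chassis_gpps:.2f} G PPS, {per_chassis_tbps:.2f} Tbps")
```

As the note says, these are half-duplex figures: each logical core handles one direction only, so they should not be doubled to estimate bidirectional capacity.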

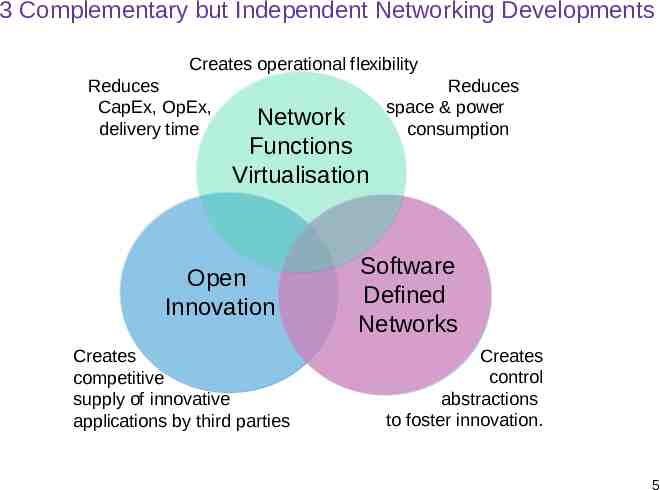

3 Complementary but Independent Networking Developments

- Network Functions Virtualisation
  - Creates operational flexibility
  - Reduces CapEx, OpEx, delivery time
  - Reduces space & power consumption
- Open Innovation
  - Creates competitive supply of innovative applications by third parties
- Software Defined Networks
  - Creates control abstractions to foster innovation.

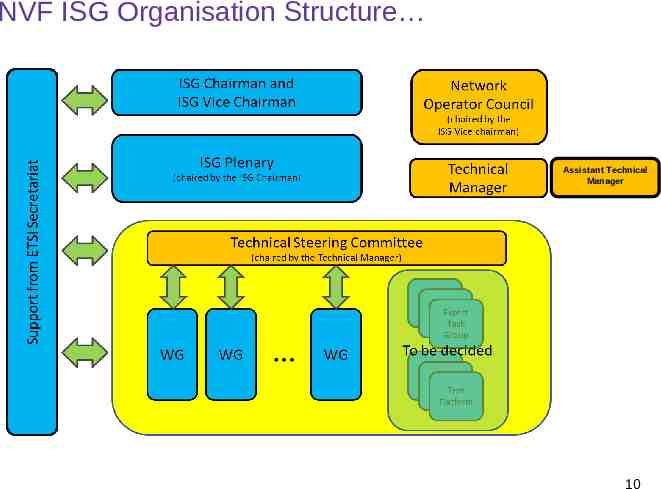

New NfV Industry Specification Group (ISG)

- First meeting mid-Jan 2013
  - 150 participants
  - 100 attendees from 50 firms
- Network-operator-driven ISG
  - Initiated by 13 carriers shown
  - Consensus in white paper
  - Network Operator Council offers requirements
  - grown to 23 members so far
- Engagement terms
  - under ETSI, but open to non-members
  - non-members sign participation agreement: essentially, must declare relevant IPR and offer it under fair & reasonable terms
  - only per-meeting fees to cover costs
- Deliverables
  - White papers identifying gaps and challenges
  - as input to relevant standardisation bodies
- ETSI NfV collaboration portal
  - white paper, published deliverables
  - how to sign up, join mail lists, etc
  - http://portal.etsi.org/portal/server.pt/community/NFV/367

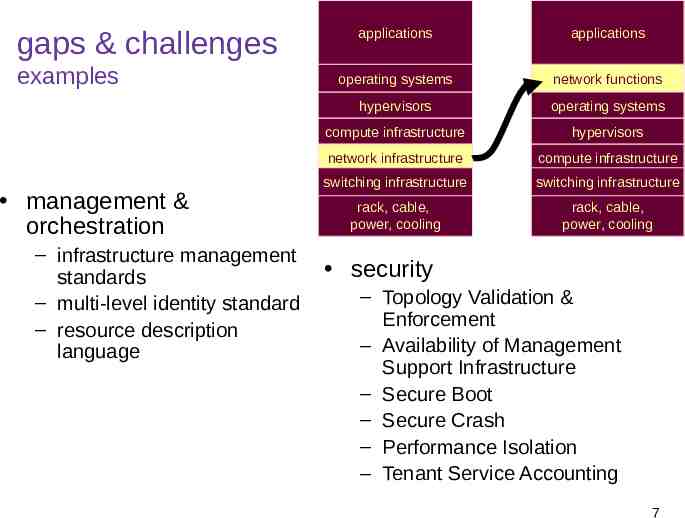

Gaps & challenges: examples

- management & orchestration
  - infrastructure management standards
  - multi-level identity standard
  - resource description language
- security
  - Topology Validation & Enforcement
  - Availability of Management Support Infrastructure
  - Secure Boot
  - Secure Crash
  - Performance Isolation
  - Tenant Service Accounting

Diagram labels (layer stack, top to bottom): applications; operating systems; hypervisors; compute infrastructure; network infrastructure; switching infrastructure; rack, cable, power, cooling. A second stack places network functions between applications and hypervisors.

Q&A and spare slides

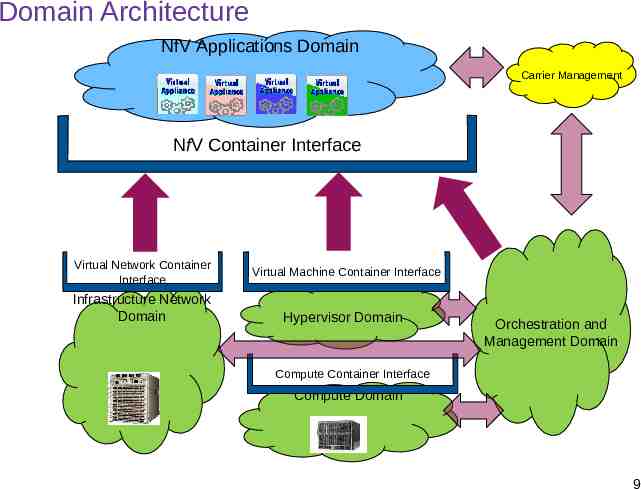

Domain Architecture

- Domains
  - NfV Applications Domain
  - Carrier Management
  - Orchestration and Management Domain
  - Hypervisor Domain
  - Infrastructure Network Domain
  - Compute Domain
- Interfaces
  - NfV Container Interface
  - Virtual Network Container Interface
  - Virtual Machine Container Interface
  - Compute Container Interface

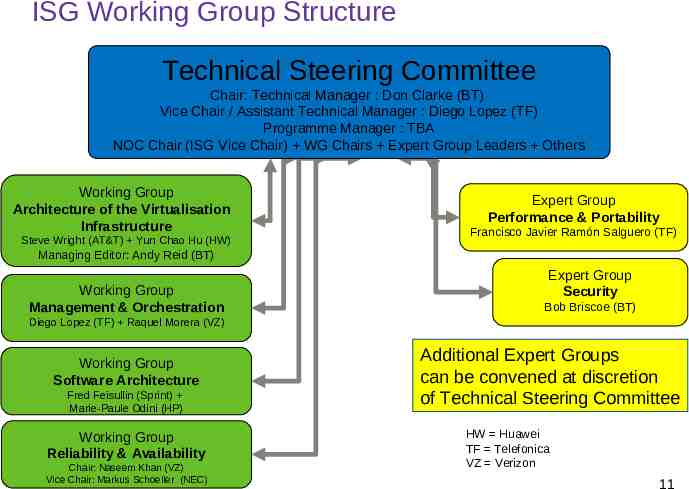

NfV ISG Organisation Structure

Diagram label: Assistant Technical Manager

ISG Working Group Structure

- Technical Steering Committee
  - Chair / Technical Manager: Don Clarke (BT)
  - Vice Chair / Assistant Technical Manager: Diego Lopez (TF)
  - Programme Manager: TBA
  - NOC Chair (ISG Vice Chair), WG Chairs, Expert Group Leaders, Others
- Working Group Architecture of the Virtualisation Infrastructure
  - Steve Wright (AT&T), Yun Chao Hu (HW)
  - Managing Editor: Andy Reid (BT)
- Working Group Management & Orchestration
  - Diego Lopez (TF), Raquel Morera (VZ)
- Working Group Software Architecture
  - Fred Feisullin (Sprint), Marie-Paule Odini (HP)
- Working Group Reliability & Availability
  - Chair: Naseem Khan (VZ)
  - Vice Chair: Markus Schoeller (NEC)
- Expert Group Performance & Portability
  - Francisco Javier Ramón Salguero (TF)
- Expert Group Security
  - Bob Briscoe (BT)
- Additional Expert Groups can be convened at discretion of Technical Steering Committee

Abbreviations: HW = Huawei, TF = Telefonica, VZ = Verizon

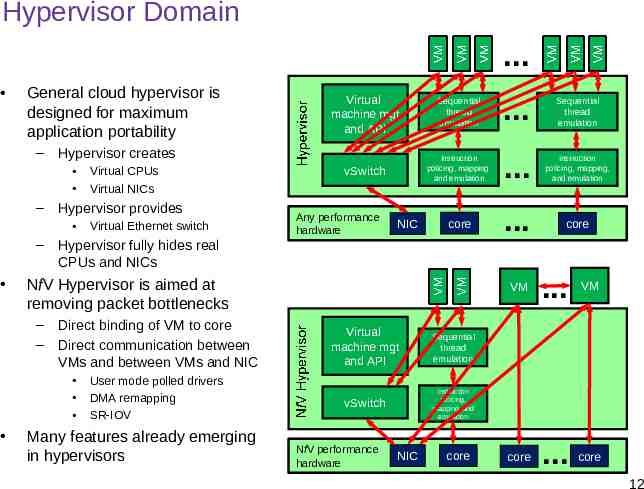

Hypervisor Domain

- General cloud hypervisor is designed for maximum application portability
  - Hypervisor creates
    - Virtual CPUs
    - Virtual NICs
  - Hypervisor provides
    - Virtual Ethernet switch
  - Hypervisor fully hides real CPUs and NICs
- NfV Hypervisor is aimed at removing packet bottlenecks
  - Direct binding of VM to core
  - Direct communication between VMs and between VMs and NIC
    - User mode polled drivers
    - DMA remapping
    - SR-IOV
- Many features already emerging in hypervisors

Diagram labels (general cloud hypervisor): Virtual machine mgt and API; Sequential thread emulation; instruction policing, mapping and emulation; vSwitch; core; NIC; Any performance hardware

Diagram labels (NfV hypervisor): Virtual machine mgt and API; Sequential thread emulation; instruction policing, mapping and emulation; vSwitch; VM; core; NIC; NfV performance hardware

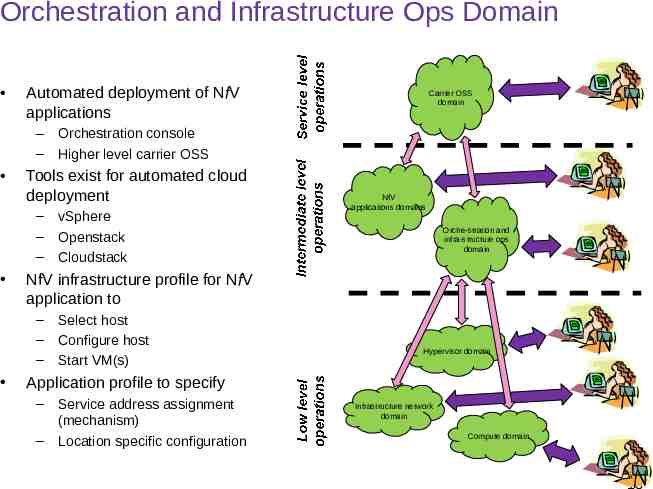

Orchestration and Infrastructure Ops Domain

- Automated deployment of NfV applications
  - Orchestration console
  - Higher level carrier OSS
- Tools exist for automated cloud deployment
  - vSphere
  - Openstack
  - Cloudstack
- NfV infrastructure profile for NfV application to
  - Select host
  - Configure host
  - Start VM(s)
- Application profile to specify
  - Service address assignment (mechanism)
  - Location specific configuration

Diagram labels: NfV applications domains; Orchestration and infrastructure ops domain; Carrier OSS domain; Hypervisor domain; Infrastructure network domain; Compute domain
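The split between an infrastructure profile (select host, configure host, start VMs) and an application profile (address assignment, location-specific configuration) can be illustrated with a toy sketch. Everything below is hypothetical: the class names, fields and the "most free cores" selection rule are assumptions made for illustration, and none of it corresponds to the API of vSphere, Openstack, Cloudstack or any ETSI specification.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """Hypothetical compute host known to the orchestrator."""
    name: str
    location: str
    free_cores: int
    config: dict = field(default_factory=dict)
    vms: list = field(default_factory=list)


@dataclass
class InfrastructureProfile:
    """Hypothetical profile: what the application needs from the infrastructure."""
    vm_count: int
    cores_per_vm: int
    host_settings: dict


@dataclass
class ApplicationProfile:
    """Hypothetical profile: service addressing and per-location settings."""
    name: str
    address_mechanism: str
    location_config: dict  # maps location name -> settings dict


def select_host(hosts, infra):
    """Step 1: pick a host with enough free cores (here: the one with the most)."""
    needed = infra.vm_count * infra.cores_per_vm
    candidates = [h for h in hosts if h.free_cores >= needed]
    if not candidates:
        raise RuntimeError("no host has enough free cores")
    return max(candidates, key=lambda h: h.free_cores)


def configure_host(host, infra):
    """Step 2: apply the host settings requested by the infrastructure profile."""
    host.config.update(infra.host_settings)


def start_vms(host, infra, app):
    """Step 3: record each started VM, with its location-specific settings."""
    settings = app.location_config.get(host.location, {})
    for index in range(infra.vm_count):
        host.vms.append({
            "name": f"{app.name}-{index}",
            "address_mechanism": app.address_mechanism,
            "settings": dict(settings),
        })
        host.free_cores -= infra.cores_per_vm


def deploy(hosts, infra, app):
    """Run the three steps in order and return the chosen host."""
    host = select_host(hosts, infra)
    configure_host(host, infra)
    start_vms(host, infra, app)
    return host
```

A caller would build a list of `Host` objects plus the two profiles and call `deploy(hosts, infra, app)`. Keeping the two profiles separate means the same application profile could be reused on different infrastructure, which is the separation the slide appears to be drawing.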