Evaluation of Education Development Projects Barb Anderegg, Connie

73 Slides155.50 KB

Evaluation of Education Development Projects Barb Anderegg, Connie Della-Piana, and Russ Pimmel National Science Foundation FIE Conference October 29, 2006

Caution The information in these slides represents the opinions of the individual program offices and not an official NSF position.

Framework for the Session

Framework for the Session Learning situations involve prior knowledge – Some knowledge correct – Some knowledge incorrect (i. e., misconceptions) Learning is – Connecting new knowledge to prior knowledge – Correcting misconception Learning requires – Recalling prior knowledge – actively – Altering prior knowledge

Active-Cooperative Learning Learning activities must encourage learners to: – Recall prior knowledge -- actively, explicitly – Connect new concepts to existing ones – Challenge and alter misconception The think-share-report-learn (TSRL) process addresses these steps

Session Format “Working” session – Short presentations (mini-lectures) – Group exercise Exercise Format – Think Share Report Learn (TSRL) Limited Time – May feel rushed – Intend to identify issues & suggest ideas Get you started No closure -- No “answers” – No “formulas”

Group Behavior Be positive, supportive, and cooperative – Limit critical or negative comments Be brief and concise – No lengthy comments Stay focused – Stay on the subject Take turns as recorder – Report for group not your own ideas

Session Goals The session will enable you to collaborate with evaluation experts in preparing effective project evaluation plans It will not make you an evaluation expert

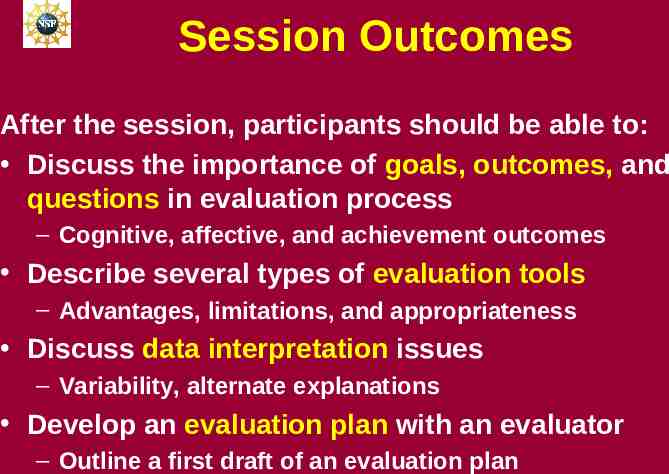

Session Outcomes After the session, participants should be able to: Discuss the importance of goals, outcomes, and questions in evaluation process – Cognitive, affective, and achievement outcomes Describe several types of evaluation tools – Advantages, limitations, and appropriateness Discuss data interpretation issues – Variability, alternate explanations Develop an evaluation plan with an evaluator – Outline a first draft of an evaluation plan

Evaluation and Project Goals/Outcomes/Questions

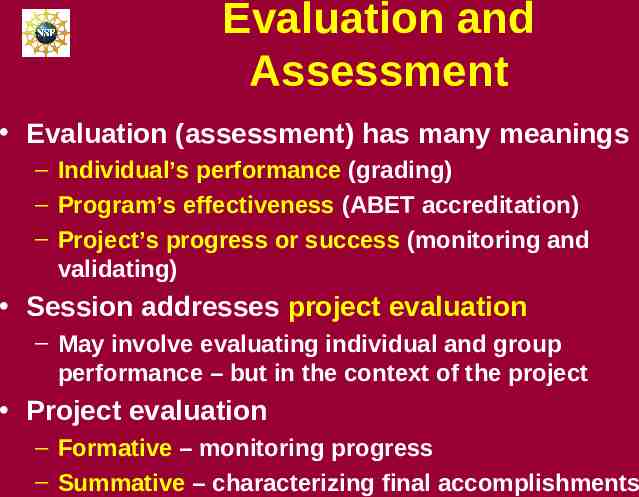

Evaluation and Assessment Evaluation (assessment) has many meanings – Individual’s performance (grading) – Program’s effectiveness (ABET accreditation) – Project’s progress or success (monitoring and validating) Session addresses project evaluation – May involve evaluating individual and group performance – but in the context of the project Project evaluation – Formative – monitoring progress – Summative – characterizing final accomplishments

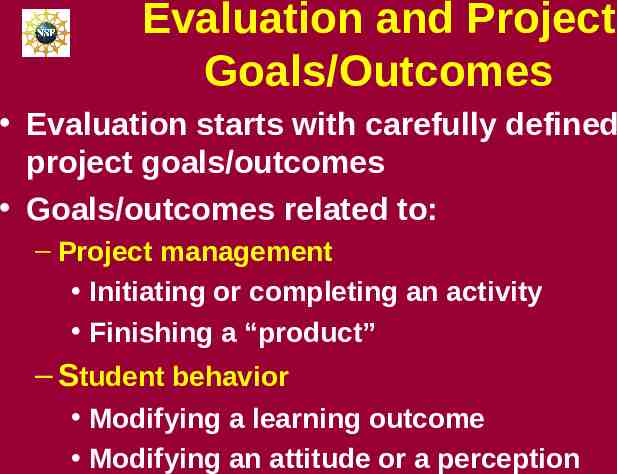

Evaluation and Project Goals/Outcomes Evaluation starts with carefully defined project goals/outcomes Goals/outcomes related to: – Project management Initiating or completing an activity Finishing a “product” – Student behavior Modifying a learning outcome Modifying an attitude or a perception

Developing Goals & Outcomes Start with one or more overarching statements of project intention – Each statement is a goal Convert each goal into one or more specific expected measurable results – Each result is an outcome

Goals – Objectives – Outcomes -- Questions Converting goals to outcomes may involve intermediate steps – Intermediate steps frequently called objectives More specific, more measurable than goals Less specific, less measurable than outcomes Outcomes (goals) lead to questions – These form the basis of the evaluation – Evaluation process collects and interprets data to answer evaluation questions

Definition of Goals, Objectives, and Outcomes Goal – Broad, overarching statement of intention or ambition – A goal typically leads to several objectives Objective – Specific statement of intention – More focused and specific than goal – A objective may lead to one or more outcomes Outcome – Statement of expected result – Measurable with criteria for success NOTE: No consistent definition of these terms

Exercise #1: Identification of Goals/Outcomes Read the abstract – Note - Goal statement removed Suggest two plausible goals – One focused on a change in learning – One focused on a change in some other aspect of student behavior

Abstract The goal of the project is The project is developing computer-based instructional modules for statics and mechanics of materials. The project uses 3D rendering and animation software, in which the user manipulates virtual 3D objects in much the same manner as they would physical objects. Tools being developed enable instructors to realistically include external forces and internal reactions on 3D objects as topics are being explained during lectures. Exercises are being developed for students to be able to communicate with peers and instructors through real-time voice and text interactions. The project is being evaluated by The project is being disseminated through The broader impacts of the project are

PD’s Response -- Goals Goals may focus on – – – – Cognitive behavior Affective behavior Success rates Diversity Cognitive, affective or success in targeted subgroups

PD’s Response – Goals on Cognitive Behavior GOAL: To improve understanding of – Concepts & application in course – Solve textbook problems – Draw free-body diagrams for textbook problems – Describe verbally the effect of external forces on a solid object – Concepts & application beyond course – Solve out-of-context problems – Visualize 3-D problems – Communicate technical problems orally

PD’s Response – Goals on Affective Behavior GOAL: To improve – Interest in the course – Attitude about Profession Curriculum Department – Self- confidence – Intellectual development

PD’s Response – Goals on Success Rates Goals on achievement rate changes – Improve Recruitment rates Retention or persistence rates Graduation rates

PD’s Response – Goals on Diversity GOAL: To increase a target group’s – – – – Understanding of concepts Achievement rate Attitude about profession Self-confidence “Broaden the participation of underrepresented groups”

Exercise #2: Transforming Goals into Outcomes Write one expected measurable outcome for each of the following goals: 1. Increase the students’ understanding of the concepts in statics 1. Improve the students’ attitude about engineering as a career

PD’s Response -Outcomes Conceptual understanding Students will be better able to solve simple conceptual problems that do not require the use of formulas or calculations Students will be better able to solve out-of-context problems. Attitude Students will be more likely to describe engineering as an exciting career The percentage of students who transfer out of engineering after the statics course will decrease.

Exercise #3: Transforming Outcomes into Questions Write a question for these expected measurable outcomes: 1. Students will be better able to solve simple conceptual problems that do not require the use of formulas or calculations 2. In informal discussions, students will be more likely to describe engineering as an exciting career

PD’s Response -Questions Conceptual understanding Did the students’ ability to solve simple conceptual problems increase ? Did the students’ ability to solve simple conceptual problems increase because of the use of the 3D rendering and animation software?

PD’s Response -Questions Attitude Did the students discussions indicate more excitement, about engineering as a career? Did the students discussions indicate more excitement, about engineering as a career because of the use of the 3D rendering and animation software?

Tools for Evaluating Learning Outcomes

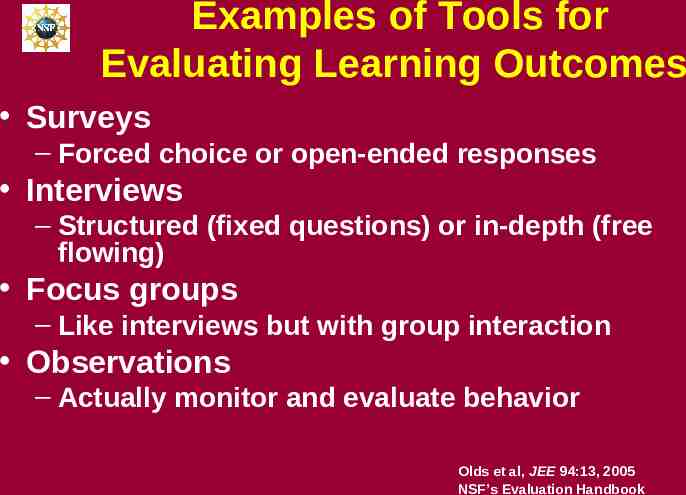

Examples of Tools for Evaluating Learning Outcomes Surveys – Forced choice or open-ended responses Interviews – Structured (fixed questions) or in-depth (free flowing) Focus groups – Like interviews but with group interaction Observations – Actually monitor and evaluate behavior Olds et al, JEE 94:13, 2005 NSF’s Evaluation Handbook

Evaluation Tools Tool characteristics – Advantages and disadvantages – Suitability for some evaluation questions but not for others

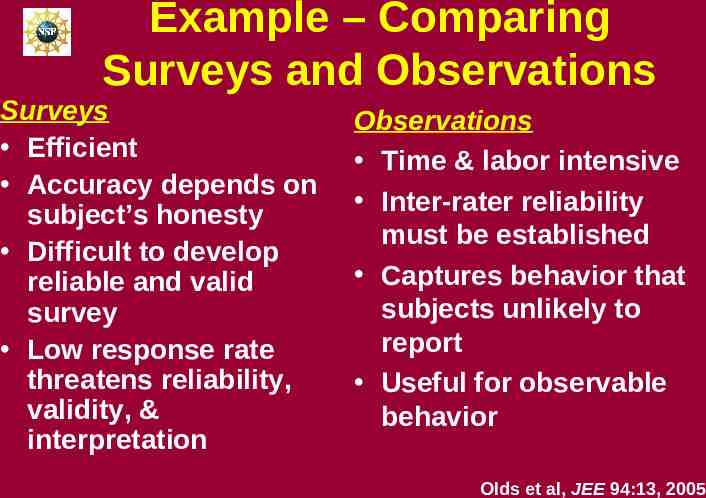

Example – Comparing Surveys and Observations Surveys Efficient Accuracy depends on subject’s honesty Difficult to develop reliable and valid survey Low response rate threatens reliability, validity, & interpretation Observations Time & labor intensive Inter-rater reliability must be established Captures behavior that subjects unlikely to report Useful for observable behavior Olds et al, JEE 94:13, 2005

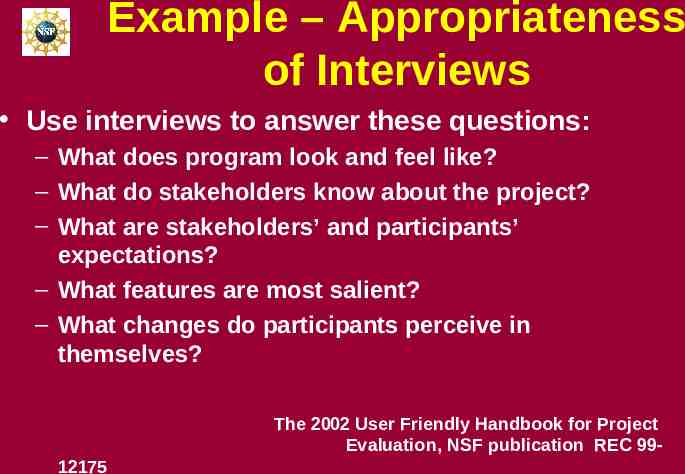

Example – Appropriateness of Interviews Use interviews to answer these questions: – What does program look and feel like? – What do stakeholders know about the project? – What are stakeholders’ and participants’ expectations? – What features are most salient? – What changes do participants perceive in themselves? The 2002 User Friendly Handbook for Project Evaluation, NSF publication REC 9912175

Concept Inventories (CIs)

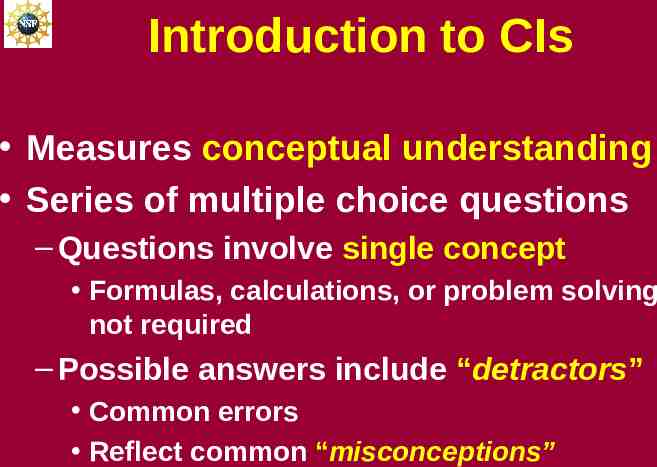

Introduction to CIs Measures conceptual understanding Series of multiple choice questions – Questions involve single concept Formulas, calculations, or problem solving not required – Possible answers include “detractors” Common errors Reflect common “misconceptions”

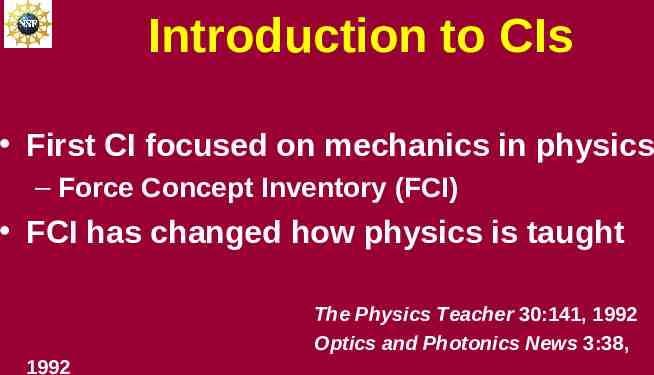

Introduction to CIs First CI focused on mechanics in physics – Force Concept Inventory (FCI) FCI has changed how physics is taught The Physics Teacher 30:141, 1992 Optics and Photonics News 3:38, 1992

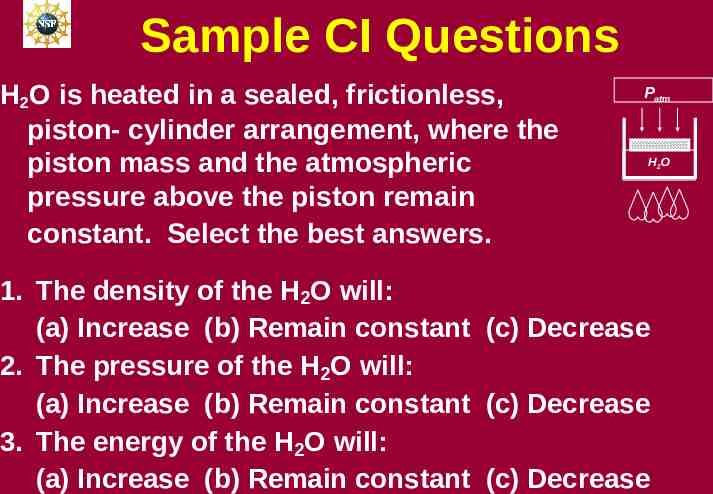

Sample CI Questions H2O is heated in a sealed, frictionless, piston- cylinder arrangement, where the piston mass and the atmospheric pressure above the piston remain constant. Select the best answers. Patm H2O 1. The density of the H2O will: (a) Increase (b) Remain constant (c) Decrease 2. The pressure of the H2O will: (a) Increase (b) Remain constant (c) Decrease 3. The energy of the H2O will: (a) Increase (b) Remain constant (c) Decrease

Other Concept Inventories Existing concept inventories – – – – – Chemistry Statistics Strength of materials Thermodynamics Heat transfer -- Fluid mechanics -- Circuits -- Signals and systems -- Electromagnetic waves -- Etc. Richardson, in Invention and Impact, AAAS, 2004

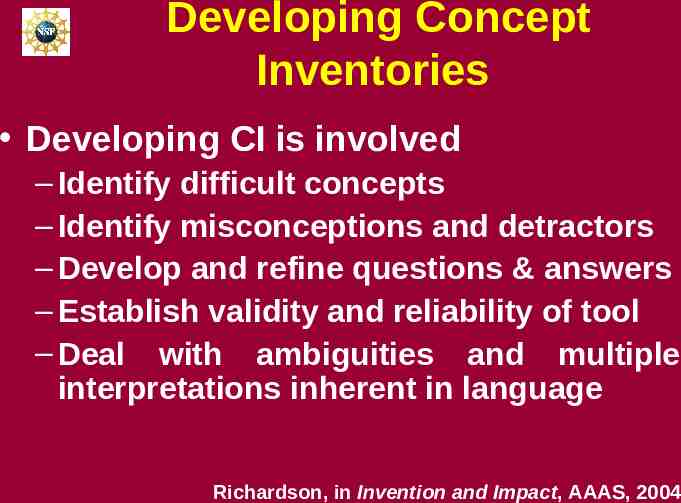

Developing Concept Inventories Developing CI is involved – Identify difficult concepts – Identify misconceptions and detractors – Develop and refine questions & answers – Establish validity and reliability of tool – Deal with ambiguities and multiple interpretations inherent in language Richardson, in Invention and Impact, AAAS, 2004

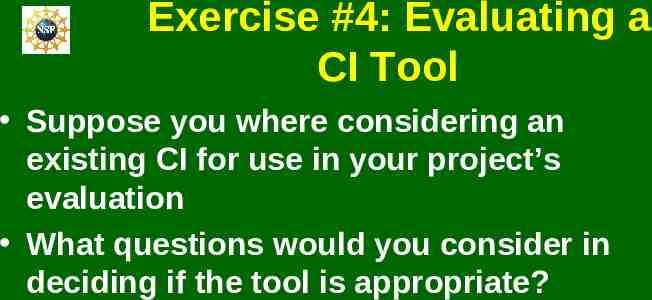

Exercise #4: Evaluating a CI Tool Suppose you where considering an existing CI for use in your project’s evaluation What questions would you consider in deciding if the tool is appropriate?

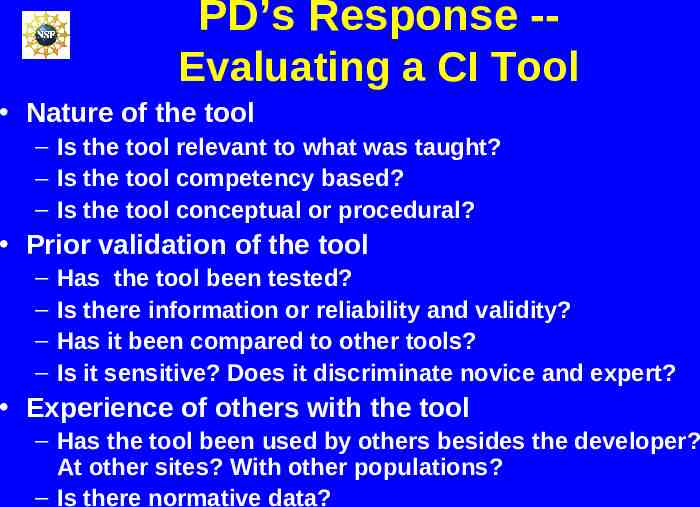

PD’s Response -Evaluating a CI Tool Nature of the tool – Is the tool relevant to what was taught? – Is the tool competency based? – Is the tool conceptual or procedural? Prior validation of the tool – – – – Has the tool been tested? Is there information or reliability and validity? Has it been compared to other tools? Is it sensitive? Does it discriminate novice and expert? Experience of others with the tool – Has the tool been used by others besides the developer? At other sites? With other populations? – Is there normative data?

Tools for Evaluating Affective Factors

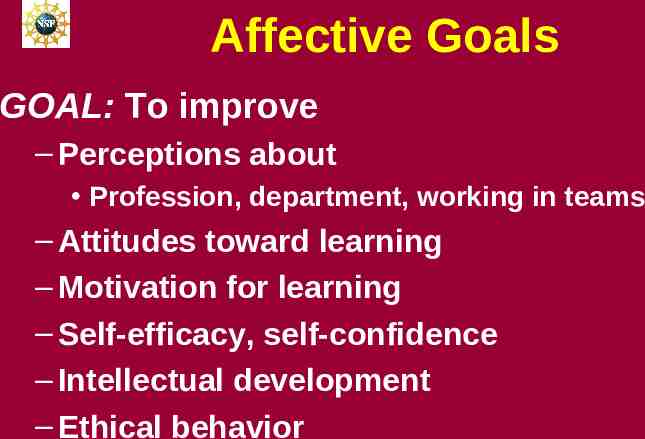

Affective Goals GOAL: To improve – Perceptions about Profession, department, working in teams – Attitudes toward learning – Motivation for learning – Self-efficacy, self-confidence – Intellectual development – Ethical behavior

Exercise #5: Tools for Affective Outcome Suppose your project's outcomes included: 1. Improving perceptions about the profession 2. Improving intellectual development Answer the two questions for each outcome: Do you believe that established, tested tools (i.e., vetted tools) exist? Do you believe that quantitative tools exist?

PD Response -- Tools for Affective Outcomes Both qualitative and quantitative tools exist for both measurements

Assessment of Attitude Example Pittsburgh Freshman Engineering Survey – Questions about perception Confidence in their skills in chemistry, communications, engineering, etc. Impressions about engineering as a precise science, as a lucrative profession, etc. – Forced choices versus open-ended Multiple-choice Besterfield-Sacre et al , JEE 86:37, 1997

Assessment of Attitude – Example (Cont.) Validated using alternate approaches: – Item analysis – Verbal protocol elicitation – Factor analysis Compared students who stayed in engineering to those who left Besterfield-Sacre et al , JEE 86:37, 1997

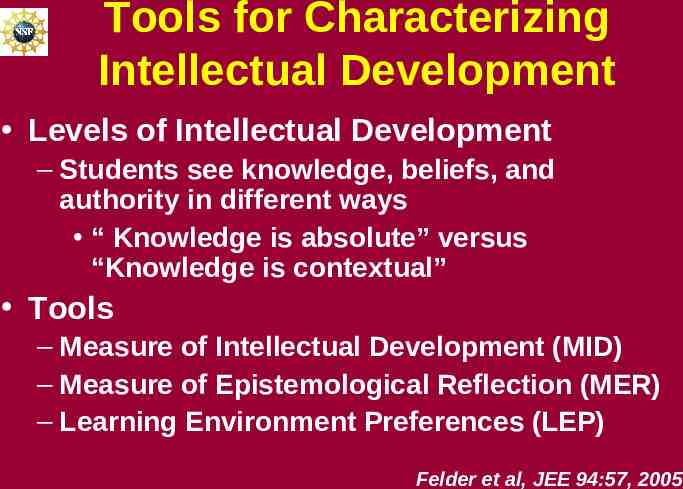

Tools for Characterizing Intellectual Development Levels of Intellectual Development – Students see knowledge, beliefs, and authority in different ways “ Knowledge is absolute” versus “Knowledge is contextual” Tools – Measure of Intellectual Development (MID) – Measure of Epistemological Reflection (MER) – Learning Environment Preferences (LEP) Felder et al, JEE 94:57, 2005

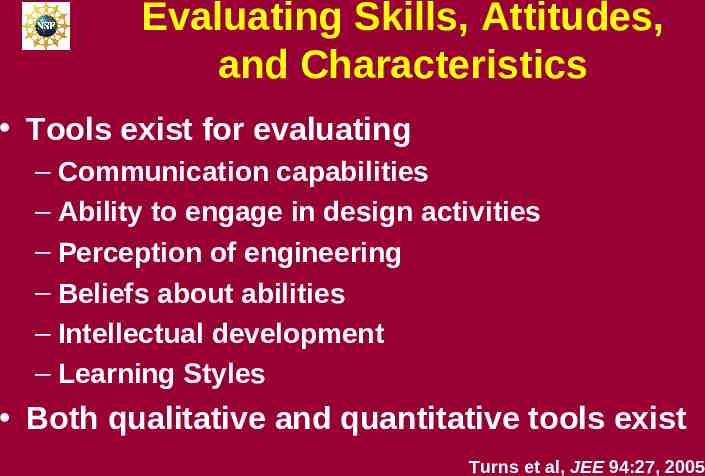

Evaluating Skills, Attitudes, and Characteristics Tools exist for evaluating – Communication capabilities – Ability to engage in design activities – Perception of engineering – Beliefs about abilities – Intellectual development – Learning Styles Both qualitative and quantitative tools exist Turns et al, JEE 94:27, 2005

Interpreting Evaluation Data

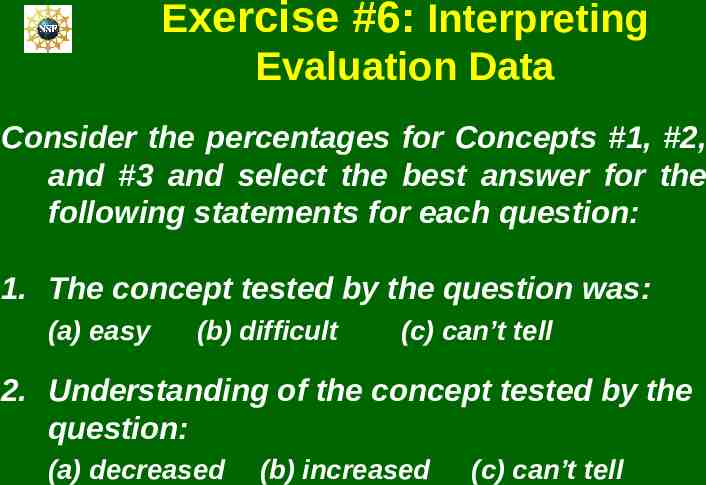

Exercise #6: Interpreting Evaluation Data Consider the percentages for Concepts #1, #2, and #3 and select the best answer for the following statements for each question: 1. The concept tested by the question was: (a) easy (b) difficult (c) can’t tell 2. Understanding of the concept tested by the question: (a) decreased (b) increased (c) can’t tell

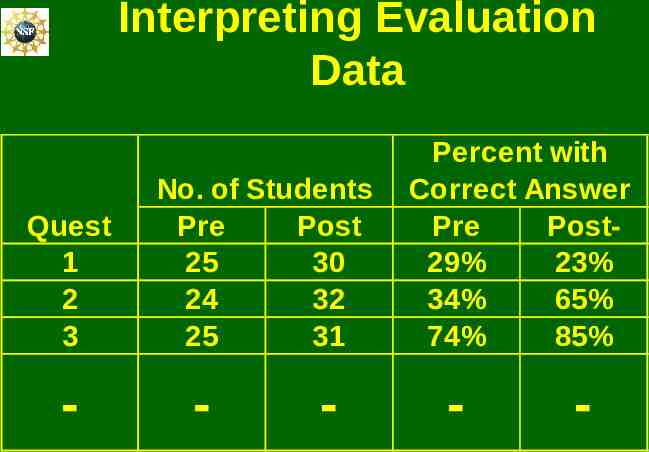

Interpreting Evaluation Data Quest 1 2 3 - No. of Students Pre Post 25 30 24 32 25 31 - - Percent with Correct Answer Pre Post29% 23% 34% 65% 74% 85% - -

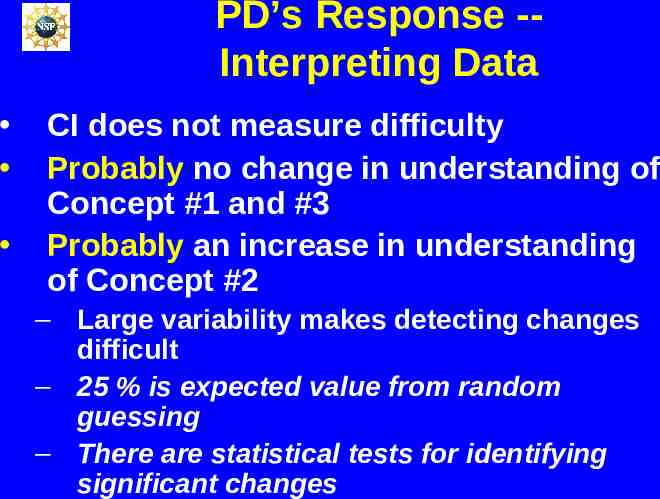

PD’s Response -Interpreting Data CI does not measure difficulty Probably no change in understanding of Concept #1 and #3 Probably an increase in understanding of Concept #2 – Large variability makes detecting changes difficult – 25 % is expected value from random guessing – There are statistical tests for identifying significant changes

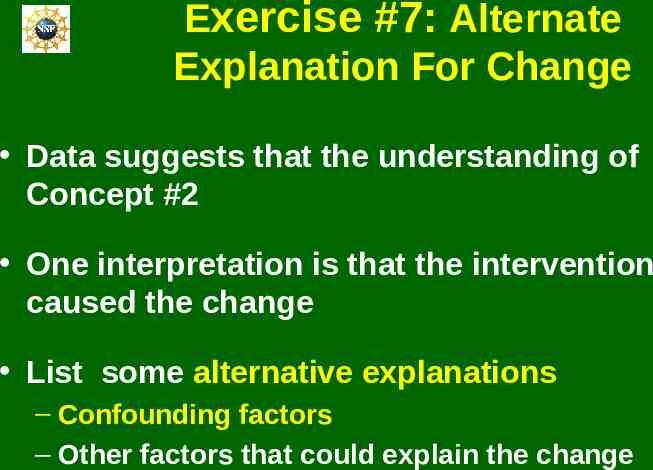

Exercise #7: Alternate Explanation For Change Data suggests that the understanding of Concept #2 One interpretation is that the intervention caused the change List some alternative explanations – Confounding factors – Other factors that could explain the change

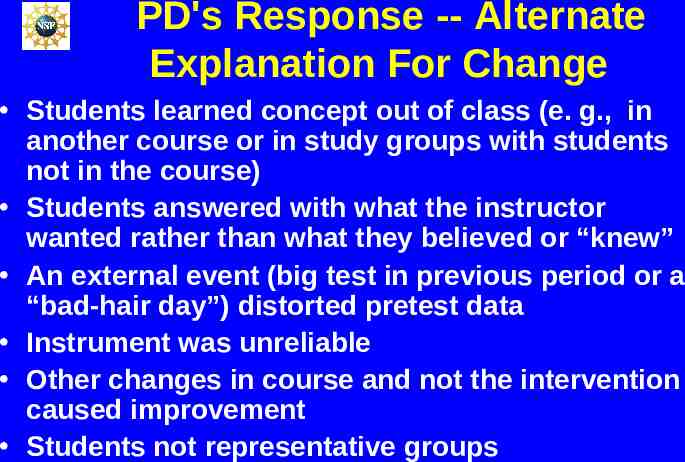

PD's Response -- Alternate Explanation For Change Students learned concept out of class (e. g., in another course or in study groups with students not in the course) Students answered with what the instructor wanted rather than what they believed or “knew” An external event (big test in previous period or a “bad-hair day”) distorted pretest data Instrument was unreliable Other changes in course and not the intervention caused improvement Students not representative groups

Exercise #8: Alternate Explanation for Lack of Change Data suggests that the understanding of Concept #1 did not increase One interpretation is that the intervention did cause a change but it was masked by other factors List some confounding factors that could have masked a real change

PD's Response -- Alternate Explanations for Lack of Effect An external event (big test in previous period or a “bad-hair day”) distorted post-test data The instrument was unreliable Implementation of the intervention was poor Population too small One or both student groups not representative Formats were different on pre and post tests

Evaluation Plan

Exercise #9: Evaluation Plan Suppose that a project’s goals are to improve: 1. The students’ understanding of the concepts in statics 2. The students’ attitude about engineering as a career List the topics that you would address in the evaluation plan

Evaluation Plan -- PD’s Responses Name & qualifications of the evaluation expert Goals and outcomes and evaluation questions Tools & protocols for evaluating each outcome Analysis & interpretation procedures Confounding factors & approaches for minimizing their impact Formative evaluation techniques for monitoring and improving the project as it evolves Summative evaluation techniques for characterizing the accomplishments of the completed project.

Working With an Evaluator

What Your Evaluation Can Accomplish Provide reasonably reliable, reasonably valid information about the merits and results of a particular program or project operating in particular circumstance Generalizations are tenuous Evaluation – Tells what you accomplished Without it you don’t know – Gives you a story (data) to share

Perspective on Project Evaluation Evaluation is complicated & involved – Not an end-of-project “add-on” Evaluation requires expertise Get an evaluator involved EARLY – In proposal writing stage – In conceptualizing the project

Finding an Evaluator Other departments – education, educational psychology, psychology, administration, sociology, anthropology, science or mathematics education, engineering education Campus teaching and learning center Colleagues and researchers Professional organizations Independent consultants NSF sessions or projects Question: Internal or external evaluator?

Exercise #10: Evaluator Questions List two or three questions that an evaluator would have for you as you begin working together on an evaluation plan.

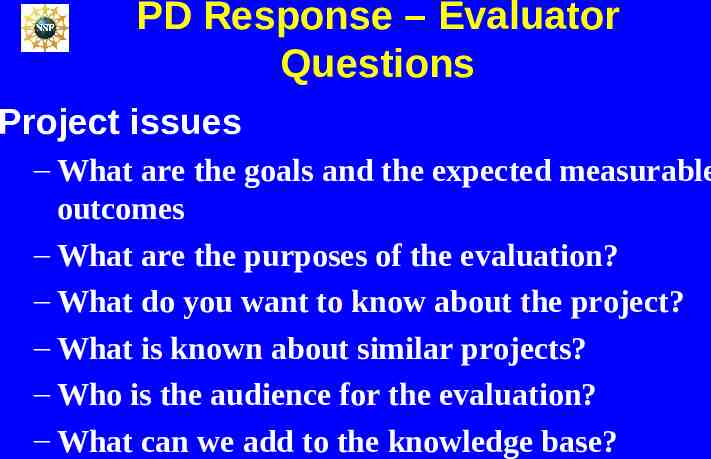

PD Response – Evaluator Questions Project issues – What are the goals and the expected measurable outcomes – What are the purposes of the evaluation? – What do you want to know about the project? – What is known about similar projects? – Who is the audience for the evaluation? – What can we add to the knowledge base?

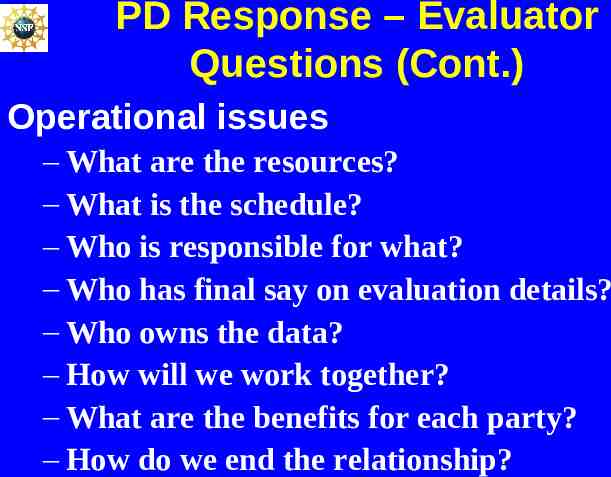

PD Response – Evaluator Questions (Cont.) Operational issues – What are the resources? – What is the schedule? – Who is responsible for what? – Who has final say on evaluation details? – Who owns the data? – How will we work together? – What are the benefits for each party? – How do we end the relationship?

Preparing to Work With An Evaluator Become knowledgeable – Draw on your experience – Talk to colleagues Clarify purpose of project & evaluation – Project’s goals and outcomes – Questions for evaluation – Usefulness of evaluation Anticipate results – Confounding factors

Working With Evaluator Talk with evaluator about your idea (from the start) – Share the vision Become knowledgeable – Discuss past and current efforts Define project goals, objectives and outcomes – Develop project logic Define purpose of evaluation – Develop questions – Focus on implementation and outcomes – Stress usefulness

Culturally Responsive Evaluations Cultural differences can affect evaluations Evaluations should be done with awareness of cultural context of project Evaluations should be responsive to – Racial/ethnic diversity – Gender – Disabilities – Language

Working With Evaluator (Cont) Anticipate results – List expected outcomes – Plan for negative findings – Consider possible unanticipated positive outcomes – Consider possible unintended negative consequences Interacting with evaluator – Identify benefits to evaluator (e.g. career goals) – Develop a team-orientation – Assess the relationship

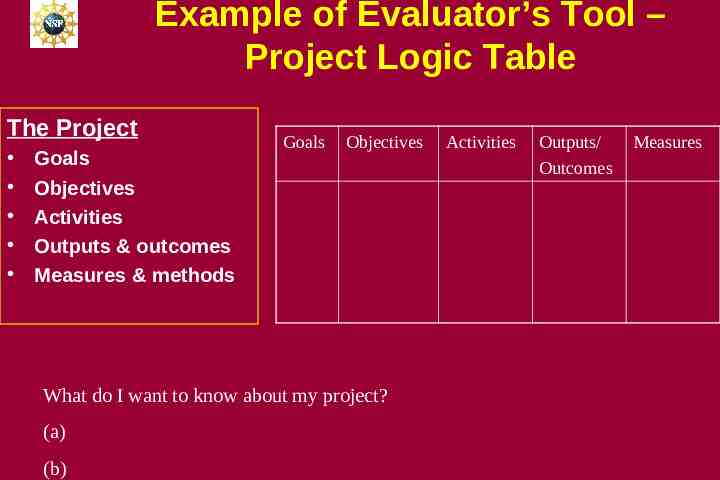

Example of Evaluator’s Tool – Project Logic Table The Project Goals Objectives Activities Outputs & outcomes Measures & methods Goals Objectives What do I want to know about my project? (a) (b) Activities Outputs/ Outcomes Measures

Human Subjects and the IRB Projects that collect data from or about students or faculty members involve human subjects Institution must submit one of these – Results from IRB review on proposal’s coversheet – Formal statement from IRB representative declaring the research exempt Not the PI – IRB approval form See “Human Subjects” section in GPG NSF Grant Proposal Guide (GPG)

Other Sources NSF’s User Friendly Handbook for Project Evaluation – http://www.nsf.gov/pubs/2002/nsf02057/start.htm Online Evaluation Resource Library (OERL) – http://oerl.sri.com/ Field-Tested Learning Assessment Guide (FLAG) – http://www.wcer.wisc.edu/archive/cl1/flag/default.asp Science education literature